isaac_ros_dope#

Source code available on GitHub.

Quickstart#

Warning

This package requires a model preparation process that differs significantly from those of other Isaac ROS packages.

Running this quickstart requires a conversion step that must be performed on an x86_64 system.

It is not possible to complete this tutorial using only a Jetson device.

To run on Jetson, first complete the tutorial on x86_64.

Then, manually copy the converted model from the x86_64 host to the Jetson and repeat the tutorial on the Jetson.

Set Up Development Environment#

Set up your development environment by following the instructions in getting started.

(Optional) Install dependencies for any sensors you want to use by following the sensor-specific guides.

Note

We strongly recommend installing all sensor dependencies before starting any quickstarts. Some sensor dependencies require restarting the development environment during installation, which will interrupt the quickstart process.

Download Quickstart Assets#

Download quickstart data from NGC:

Make sure required libraries are installed.

sudo apt-get install -y curl jq tar

Then, run these commands to download the asset from NGC:

NGC_ORG="nvidia"
NGC_TEAM="isaac"
PACKAGE_NAME="isaac_ros_dope"
NGC_RESOURCE="isaac_ros_dope_assets"
NGC_FILENAME="quickstart.tar.gz"
MAJOR_VERSION=4
MINOR_VERSION=4
VERSION_REQ_URL="https://catalog.ngc.nvidia.com/api/resources/versions?orgName=$NGC_ORG&teamName=$NGC_TEAM&name=$NGC_RESOURCE&isPublic=true&pageNumber=0&pageSize=100&sortOrder=CREATED_DATE_DESC"
AVAILABLE_VERSIONS=$(curl -s \
    -H "Accept: application/json" "$VERSION_REQ_URL")
LATEST_VERSION_ID=$(echo $AVAILABLE_VERSIONS | jq -r "
    .recipeVersions[]
    | .versionId as \$v
    | \$v | select(test(\"^\\\\d+\\\\.\\\\d+\\\\.\\\\d+$\"))
    | split(\".\") | {major: .[0]|tonumber, minor: .[1]|tonumber, patch: .[2]|tonumber}
    | select(.major == $MAJOR_VERSION and .minor <= $MINOR_VERSION)
    | \$v
    " | sort -V | tail -n 1
)
if [ -z "$LATEST_VERSION_ID" ]; then
    echo "No corresponding version found for Isaac ROS $MAJOR_VERSION.$MINOR_VERSION"
    echo "Found versions:"
    echo $AVAILABLE_VERSIONS | jq -r '.recipeVersions[].versionId'
else
    mkdir -p ${ISAAC_ROS_WS}/isaac_ros_assets && \
    FILE_REQ_URL="https://api.ngc.nvidia.com/v2/resources/$NGC_ORG/$NGC_TEAM/$NGC_RESOURCE/\
versions/$LATEST_VERSION_ID/files/$NGC_FILENAME" && \
    curl -LO --request GET "${FILE_REQ_URL}" && \
    tar -xf ${NGC_FILENAME} -C ${ISAAC_ROS_WS}/isaac_ros_assets && \
    rm ${NGC_FILENAME}
fi
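The version-selection rule embedded in the jq filter of the download commands can be hard to read. As a rough illustration only, the Python sketch below mirrors the same rule: keep version IDs of the form X.Y.Z, require the major version to match, require the minor version to be no greater than the requested one, and take the highest remaining version. The function name `select_latest_version` and the sample version list are hypothetical and are not part of Isaac ROS or the NGC API.

```python
import re

# Hypothetical helper mirroring the jq filter; not part of Isaac ROS or NGC.
_VERSION_PATTERN = re.compile(r"^(\d+)\.(\d+)\.(\d+)$")


def select_latest_version(version_ids, major, minor):
    """Return the highest X.Y.Z version with a matching major and minor <= `minor`.

    Returns None when no version qualifies, which corresponds to the
    "No corresponding version found" branch of the shell commands.
    """
    candidates = []
    for version_id in version_ids:
        match = _VERSION_PATTERN.match(version_id)
        if match is None:
            continue  # skip IDs that are not plain X.Y.Z
        parts = tuple(int(group) for group in match.groups())
        if parts[0] == major and parts[1] <= minor:
            candidates.append((parts, version_id))
    if not candidates:
        return None
    # Tuples compare numerically, similar in effect to `sort -V | tail -n 1`.
    return max(candidates)[1]


# Made-up version IDs, for illustration only.
sample = ["4.3.1", "4.4.0", "4.5.0", "5.0.0", "latest"]
print(select_latest_version(sample, major=4, minor=4))  # prints 4.4.0
```

With `MAJOR_VERSION=4` and `MINOR_VERSION=4`, a newer 4.5.0 or 5.0.0 asset would be skipped in favor of the newest asset that does not exceed release 4.4.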

Download the Ketchup.pth DOPE model from the official DOPE GitHub repository’s model collection available here.

Move this file to ${ISAAC_ROS_WS}/isaac_ros_assets/models/dope.

For example, if the model was downloaded to ~/Downloads:

mkdir -p ${ISAAC_ROS_WS}/isaac_ros_assets/models/dope/ && \
    mv ~/Downloads/Ketchup.pth ${ISAAC_ROS_WS}/isaac_ros_assets/models/dope

Build isaac_ros_dope#

Activate the Isaac ROS environment:

isaac-ros activate

Install the prebuilt Debian package:

sudo apt-get update

sudo apt-get install -y ros-jazzy-isaac-ros-dope

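Alternatively, to build the package from source instead of installing the prebuilt Debian package, first install Git LFS: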

sudo apt-get install -y git-lfs && git lfs install

Clone this repository under ${ISAAC_ROS_WS}/src:

cd ${ISAAC_ROS_WS}/src && \
    git clone -b release-4.4 https://github.com/NVIDIA-ISAAC-ROS/isaac_ros_pose_estimation.git isaac_ros_pose_estimation

Activate the Isaac ROS environment:

isaac-ros activate

Use rosdep to install the package’s dependencies:

sudo apt-get update

rosdep update && rosdep install --from-paths ${ISAAC_ROS_WS}/src/isaac_ros_pose_estimation/isaac_ros_dope --ignore-src -y

Build the package from source:

cd ${ISAAC_ROS_WS} && \
    colcon build --symlink-install --packages-up-to isaac_ros_dope --base-paths ${ISAAC_ROS_WS}/src/isaac_ros_pose_estimation/isaac_ros_dope

Source the ROS workspace:

Note

Make sure to repeat this step in every terminal created inside the Isaac ROS environment.

Because this package was built from source, the enclosing workspace must be sourced for ROS to be able to find the package’s contents.

source install/setup.bash

Run Launch File#

Continuing inside the Isaac ROS environment, convert the PyTorch model (.pth) to a general ONNX model (.onnx):

Warning

This step must be performed on x86_64. If you intend to run the model on a Jetson, you must first convert the model on an x86_64 system, and then copy the output file to the corresponding location on the Jetson (${ISAAC_ROS_WS}/isaac_ros_assets/models/dope/Ketchup.onnx).

First install torchvision:

pip3 install --break-system-packages --no-deps --index-url https://download.pytorch.org/whl/cu130 torchvision==0.24.0+cu130 || \
    pip3 install --break-system-packages --no-deps --index-url https://download.pytorch.org/whl/cu130 torchvision==0.24.0

Now convert the model:

ros2 run isaac_ros_dope dope_converter.py --format onnx \
    --input ${ISAAC_ROS_WS}/isaac_ros_assets/models/dope/Ketchup.pth --output ${ISAAC_ROS_WS}/isaac_ros_assets/models/dope/Ketchup.onnx --row 720 --col 1280

Continuing inside the Isaac ROS environment, install the following dependencies:

sudo apt-get update

sudo apt-get install -y ros-jazzy-isaac-ros-examples

Run the following launch file to spin up a demo of this package using the quickstart rosbag:

ros2 launch isaac_ros_examples isaac_ros_examples.launch.py launch_fragments:=dope interface_specs_file:=${ISAAC_ROS_WS}/isaac_ros_assets/isaac_ros_dope/quickstart_interface_specs.json \
    model_file_path:=${ISAAC_ROS_WS}/isaac_ros_assets/models/dope/Ketchup.onnx engine_file_path:=${ISAAC_ROS_WS}/isaac_ros_assets/models/dope/Ketchup.plan

Open a second terminal inside the Isaac ROS environment:

isaac-ros activate

Run the rosbag file to simulate an image stream:

ros2 bag play -l ${ISAAC_ROS_WS}/isaac_ros_assets/isaac_ros_dope/quickstart.bag

To use a live RealSense camera instead of the quickstart rosbag, ensure that you have already set up your RealSense camera using the RealSense setup tutorial. If you have not, set up the sensor and then restart this quickstart from the beginning.

Continuing inside the Isaac ROS environment, install the following dependencies:

sudo apt-get update

sudo apt-get install -y ros-jazzy-isaac-ros-examples ros-jazzy-isaac-ros-realsense

Run the following launch file to spin up a demo of this package using a RealSense camera:

ros2 launch isaac_ros_examples isaac_ros_examples.launch.py launch_fragments:=realsense_mono_rect,dope \
    model_file_path:=${ISAAC_ROS_WS}/isaac_ros_assets/models/dope/Ketchup.onnx engine_file_path:=${ISAAC_ROS_WS}/isaac_ros_assets/models/dope/Ketchup.plan

Visualize Results#

Open a new terminal inside the Isaac ROS environment:

isaac-ros activate

Install RViz and the vision_msgs RViz plugin:

sudo apt-get install -y ros-jazzy-rviz2 ros-jazzy-vision-msgs-rviz-plugins

source /opt/ros/jazzy/setup.bash

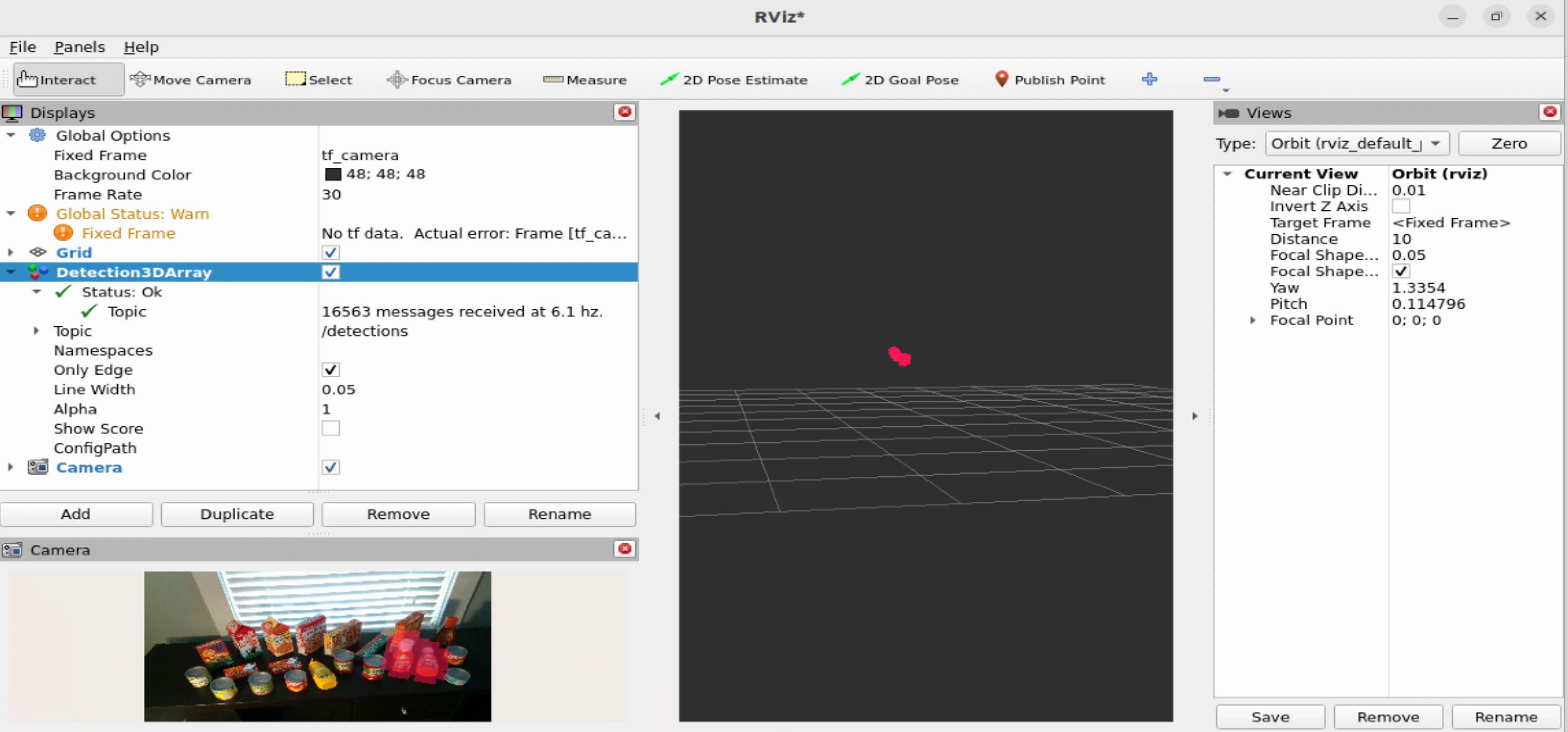

Visualize the detection3d array in RViz2:

rviz2

Make sure to update the Fixed Frame to tf_camera when using the quickstart rosbag.

Make sure to update the Fixed Frame to camera_color_optical_frame when using a RealSense camera.

Then click on the Add button, select By display type and choose Detection3DArray under vision_msgs_rviz_plugins. Expand the Detection3DArray display and change the topic to /detections. Check the Only Edge option. Then click on the Add button again and select By Topic. Under /dope_encoder, expand the /resize drop-down, select /image, and click the Camera option to see the image with the bounding box over detected objects. Refer to the accompanying picture.

Try More Examples#

To continue your exploration, check out the following suggested examples:

Note

For best results, always crop or resize input images to the same dimensions your DOPE model is expecting.
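One common way to match a model's expected dimensions without distorting the image is to scale the image until it covers the target size and then center-crop the excess. The sketch below only computes the sizes involved. It is an illustration with a hypothetical function name, not the preprocessing that the Isaac ROS encoder performs. The 1280x720 target matches the --row 720 --col 1280 values used when converting the model in this quickstart.

```python
def resize_then_center_crop(src_w, src_h, dst_w, dst_h):
    """Plan an aspect-preserving resize followed by a center crop.

    Returns ((resized_w, resized_h), (crop_x, crop_y)), where crop_x and
    crop_y give the top-left corner of the dst_w x dst_h crop window
    inside the resized image.
    """
    # Scale so that both dimensions are at least as large as the target.
    scale = max(dst_w / src_w, dst_h / src_h)
    resized_w = max(dst_w, round(src_w * scale))
    resized_h = max(dst_h, round(src_h * scale))
    # Center the crop window inside the resized image.
    crop_x = (resized_w - dst_w) // 2
    crop_y = (resized_h - dst_h) // 2
    return (resized_w, resized_h), (crop_x, crop_y)


# A 640x480 camera image prepared for a model expecting 1280x720 input.
print(resize_then_center_crop(640, 480, 1280, 720))  # prints ((1280, 960), (0, 120))
```

Here the image is scaled by a factor of 2 to 1280x960, and 120 rows are cropped from the top and bottom to reach 720 rows.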

Use Different Models#

Click here for more information on how to use NGC models.

Alternatively, consult the DOPE model repository to try other models.

| Model Name | Use Case |
|---|---|
| | The DOPE model repository. This should be used if |

Troubleshooting#

Isaac ROS Troubleshooting#

For solutions to problems with Isaac ROS, review here.

Deep Learning Troubleshooting#

For solutions to problems with using DNN models, review here.

API#

Usage#

Two launch files are provided to use this package. The first launch file launches isaac_ros_tensor_rt, whereas the other one uses isaac_ros_triton, along with the necessary components to perform encoding on images and decoding of the DOPE network’s output.

Warning

For your specific application, these launch files may need to be modified. Consult the available components to see the configurable parameters.

| Launch File | Components Used |
|---|---|

Warning

There is also a config file that should be modified in isaac_ros_dope/config/dope_config.yaml.

DopeDecoderNode#

ROS Parameters#

| ROS Parameter | Type | Default | Description |
|---|---|---|---|
| | | | The name of the configuration file to parse. Note: The node will look for that file name under |
| | | | The object class the DOPE network is detecting and the DOPE decoder is interpreting. This name should be listed in the configuration file along with its corresponding cuboid dimensions. |
| | | | Whether the DOPE Decoder Node will broadcast poses to the TF Tree. |
| | | | The minimum value of a peak in a DOPE belief map. |
| | | | Rotation along Y axis in degrees |
| | | | Rotation along X axis in degrees |
| | | | Rotation along Z axis in degrees |
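To make the minimum peak value parameter more concrete, the sketch below finds local maxima in a small 2D belief map and keeps only those whose value reaches a threshold. This is a simplified illustration in plain Python, not the decoder's actual implementation. The function name `find_peaks`, the 4-neighbor maximum rule, and the sample values are all assumptions.

```python
def find_peaks(belief_map, threshold):
    """Return (row, col, value) for each local maximum with value >= threshold.

    A cell counts as a local maximum when it is strictly greater than its
    up, down, left, and right neighbors; positions outside the map are ignored.
    """
    rows, cols = len(belief_map), len(belief_map[0])
    peaks = []
    for r in range(rows):
        for c in range(cols):
            value = belief_map[r][c]
            if value < threshold:
                continue  # too weak to count as a peak
            neighbors = [
                belief_map[nr][nc]
                for nr, nc in ((r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1))
                if 0 <= nr < rows and 0 <= nc < cols
            ]
            if all(value > neighbor for neighbor in neighbors):
                peaks.append((r, c, value))
    return peaks


# Made-up belief map with one strong and one weak peak.
belief_map = [
    [0.0, 0.1, 0.0, 0.0],
    [0.1, 0.8, 0.1, 0.0],
    [0.0, 0.1, 0.0, 0.05],
    [0.0, 0.0, 0.05, 0.3],
]
print(find_peaks(belief_map, threshold=0.5))  # prints [(1, 1, 0.8)]
print(find_peaks(belief_map, threshold=0.2))  # prints [(1, 1, 0.8), (3, 3, 0.3)]
```

Raising the threshold suppresses weak responses at the cost of possibly missing faint keypoints, while lowering it admits more candidates.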

Configuration File#

The DOPE configuration file, which can be found at isaac_ros_dope/config/dope_config.yaml, may need to be modified. Specifically, the object type that you specify in the DopeDecoderNode must be listed in the dope_config.yaml file. The DOPE decoder node uses that entry to pick the right parameters for transforming the belief maps from the inference node into object poses.

Note

The object_name should correspond to one of the objects listed in the DOPE configuration file, with the corresponding model used.

Note

The rotation parameters can be used to specify a rotation that is applied to the pose output by the network. This is useful when you want to align detections between FoundationPose and DOPE. For example, for the soup can asset, a rotation of 90 degrees about the y axis is needed. All rotation values are in degrees, and the rotation is performed in a ZYX sequence.
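As a rough sketch of what a ZYX rotation sequence specified in degrees can look like, the plain-Python example below builds a rotation matrix as R = Rz · Ry · Rx. Whether the decoder composes the rotations in exactly this order and convention (for example, intrinsic versus extrinsic axes) is an assumption here, and the function names are hypothetical.

```python
import math


def _matmul(a, b):
    """Multiply two 3x3 matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]


def rotation_zyx(x_deg, y_deg, z_deg):
    """Build R = Rz * Ry * Rx from angles in degrees (assumed convention)."""
    x, y, z = (math.radians(angle) for angle in (x_deg, y_deg, z_deg))
    rx = [[1.0, 0.0, 0.0],
          [0.0, math.cos(x), -math.sin(x)],
          [0.0, math.sin(x), math.cos(x)]]
    ry = [[math.cos(y), 0.0, math.sin(y)],
          [0.0, 1.0, 0.0],
          [-math.sin(y), 0.0, math.cos(y)]]
    rz = [[math.cos(z), -math.sin(z), 0.0],
          [math.sin(z), math.cos(z), 0.0],
          [0.0, 0.0, 1.0]]
    return _matmul(rz, _matmul(ry, rx))


def rotate(matrix, vector):
    """Apply a 3x3 rotation matrix to a 3-element vector."""
    return [sum(matrix[i][k] * vector[k] for k in range(3)) for i in range(3)]


# Soup can example from the note: 90 degrees about the y axis.
r = rotation_zyx(0.0, 90.0, 0.0)
print([round(component, 6) for component in rotate(r, [1.0, 0.0, 0.0])])
```

Under this convention, a 90 degree rotation about y maps the x axis onto the negative z axis, up to floating-point rounding.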

ROS Topics Subscribed#

| ROS Topic | Interface | Description |
|---|---|---|
| | | The tensor that represents the belief maps, which are outputs from the DOPE network. |
| | | The input image camera_info. |

ROS Topics Published#

| ROS Topic | Interface | Description |
|---|---|---|
| | | An array of detections found by the DOPE network and output by the DOPE Decoder Node. Each detection specifies pose and bounding box dimensions. |

Warning

The DOPE network outputs one pose for each instance of an object. As such, the ObjectHypothesis[] results attribute for each element in the Detection3DArray has only one element that includes the object pose, but does not specify the `score`.
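A consumer of this topic would therefore typically read the single result of each detection for its pose and ignore the score. The sketch below illustrates this on stand-in objects built with `SimpleNamespace` so that it runs without ROS. The field names (`detections`, `results`, `pose.pose.position`, `bbox.size`) are assumed to follow the vision_msgs message definitions and should be checked against the installed vision_msgs version. The function name `summarize_detections` and the sample values are hypothetical.

```python
from types import SimpleNamespace as NS


def summarize_detections(detection_array):
    """Collect position and bounding box size from each detection.

    Only the first result of each detection is read, and its score is
    deliberately ignored because it is not specified.
    """
    summaries = []
    for detection in detection_array.detections:
        if not detection.results:
            continue  # defensive: skip detections without a result
        position = detection.results[0].pose.pose.position
        size = detection.bbox.size
        summaries.append({
            "position": (position.x, position.y, position.z),
            "size": (size.x, size.y, size.z),
        })
    return summaries


# Stand-in for a received message, with made-up values.
fake_message = NS(detections=[
    NS(
        results=[NS(pose=NS(pose=NS(position=NS(x=0.1, y=-0.2, z=0.9))))],
        bbox=NS(size=NS(x=0.07, y=0.15, z=0.05)),
    ),
])
print(summarize_detections(fake_message))
```

In a real node, the same function could be called from a subscriber callback with the received message in place of `fake_message`.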