Isaac ROS Image Segmentation

Overview

Isaac ROS Image Segmentation contains ROS packages for semantic image segmentation.

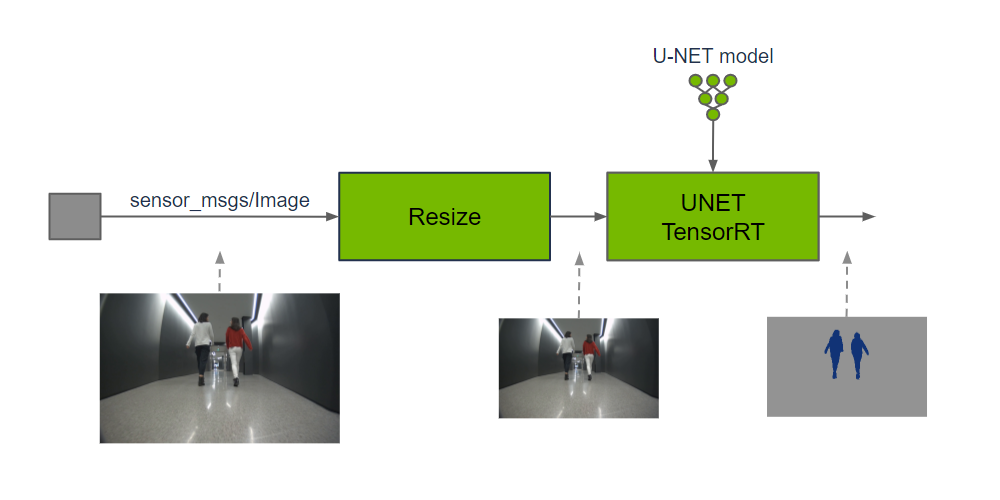

These packages classify an input image at the pixel level by running GPU-accelerated inference on a DNN model. Each pixel of the input image is predicted to belong to one of a set of defined classes. Perception functions can use the output prediction to understand where each class is located in a 2D image, or fuse it with the corresponding depth information to locate the class in a 3D scene.
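As a rough illustration of per-pixel classification, the sketch below takes one score map per class and picks the highest-scoring class for each pixel. It is dependency-free Python for illustration only, not the GPU implementation used by these packages; the function name `argmax_class_map`, the `scores[class][row][col]` layout, and the sample values are assumptions made for this example.

```python
def argmax_class_map(scores):
    """Convert per-class score maps into a per-pixel class-ID map.

    scores[c][y][x] is the score of class c at pixel (x, y).
    Returns class_map[y][x], the index of the highest-scoring class.
    Ties resolve to the lowest class index.
    """
    num_classes = len(scores)
    height = len(scores[0])
    width = len(scores[0][0])
    return [
        [max(range(num_classes), key=lambda c: scores[c][y][x]) for x in range(width)]
        for y in range(height)
    ]


# Hypothetical 2-class scores (0 = background, 1 = person) for a 3x2 image.
scores = [
    [[0.9, 0.2, 0.6], [0.7, 0.1, 0.8]],  # background scores
    [[0.1, 0.8, 0.4], [0.3, 0.9, 0.2]],  # person scores
]
class_map = argmax_class_map(scores)
print(class_map)  # [[0, 1, 0], [0, 1, 0]]
```

The resulting class map has the same width and height as the score maps, which is what lets downstream code look up the class of any pixel directly.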

| Package | Model Architecture | Description |
|---|---|---|
| isaac_ros_unet | U-NET | Convolutional network popular for biomedical imaging segmentation models |
| isaac_ros_segformer | Segformer | Transformer-based network that works well for objects of varying scale |
| isaac_ros_segment_anything | Segment Anything | Segments any object in an image when given a prompt as to which one |
| isaac_ros_segment_anything2 | Segment Anything2 | Segments and tracks any object in a video stream when given a prompt as to which one |

Input images may need to be cropped and resized to maintain the aspect ratio and match the input resolution expected by the DNN model. Image resolution may also be reduced to improve DNN inference performance, because inference cost typically scales with the number of pixels in the image.
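The cropping step can be sketched as follows: compute the largest centered crop that has the same aspect ratio as the network input, so that the subsequent resize is a uniform scale. This is a minimal sketch under stated assumptions, not the preprocessing these packages perform; the function name `center_crop_for_aspect` and the 1280x720 and 512x512 sizes are hypothetical.

```python
def center_crop_for_aspect(src_w, src_h, dst_w, dst_h):
    """Return (x, y, w, h) of the largest centered crop of the source
    image that has the same aspect ratio as the destination size."""
    # Compare aspect ratios by cross-multiplication to stay in integers.
    if src_w * dst_h > src_h * dst_w:
        # Source is wider than the target: keep all rows, trim columns.
        crop_h = src_h
        crop_w = src_h * dst_w // dst_h
    else:
        # Source is taller than (or matches) the target: trim rows.
        crop_w = src_w
        crop_h = src_w * dst_h // dst_w
    x = (src_w - crop_w) // 2
    y = (src_h - crop_h) // 2
    return x, y, crop_w, crop_h


# Hypothetical sizes: a 1280x720 camera image and a 512x512 network input.
crop = center_crop_for_aspect(1280, 720, 512, 512)
print(crop)  # (280, 0, 720, 720)

# Ratio of camera pixels to network-input pixels.
pixel_ratio = (1280 * 720) / (512 * 512)
print(pixel_ratio)  # 3.515625
```

With these sizes, the 720x720 crop is scaled down uniformly to 512x512, so objects keep their proportions while the network processes roughly 3.5 times fewer pixels than the full camera image.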

Image segmentation produces a classification for every pixel, so it provides more information and uses more compute than object detection, which classifies a simpler bounding-box rectangle in image coordinates. Object detection is used to know if, and where spatially in a 2D image, an object exists. Image segmentation, on the other hand, is used to know which pixels belong to the class. One application is fusing the segmentation result with the corresponding depth information to determine an object's location in a 3D scene.
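One way to picture the depth-fusion application is to back-project every pixel of a given class through a pinhole camera model and average the resulting 3D points. The sketch below assumes a class-ID mask and a depth image that are already aligned pixel for pixel, with depth in meters along the optical axis; the function name `mask_to_3d_centroid`, the intrinsics `fx`, `fy`, `cx`, `cy`, and the sample data are all hypothetical, and this is not code from these packages.

```python
def mask_to_3d_centroid(mask, depth, fx, fy, cx, cy, target_class=1):
    """Average the back-projected 3D points of all pixels labeled target_class.

    mask[v][u] is a class ID and depth[v][u] is the depth in meters along
    the optical axis (0 means no measurement). Returns (X, Y, Z) in the
    camera frame, or None if no valid pixel is found.
    """
    sum_x = sum_y = sum_z = 0.0
    count = 0
    for v, row in enumerate(mask):
        for u, label in enumerate(row):
            z = depth[v][u]
            if label != target_class or z <= 0.0:
                continue  # Skip other classes and missing depth readings.
            # Pinhole back-projection of pixel (u, v) at depth z.
            sum_x += (u - cx) * z / fx
            sum_y += (v - cy) * z / fy
            sum_z += z
            count += 1
    if count == 0:
        return None
    return (sum_x / count, sum_y / count, sum_z / count)


# Hypothetical 3x2 mask (1 = object) and aligned depth image in meters.
mask = [[0, 1, 1],
        [0, 1, 1]]
depth = [[2.0, 2.0, 2.0],
         [2.0, 2.0, 0.0]]  # The last pixel has no depth measurement.
centroid = mask_to_3d_centroid(mask, depth, fx=100.0, fy=100.0, cx=1.0, cy=0.5)
print(centroid)
```

A bounding box from object detection would also include background pixels inside the rectangle, which is why a per-pixel mask tends to give a cleaner 3D estimate.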

Quickstarts

Isaac ROS NITROS Acceleration

This package is powered by NVIDIA Isaac Transport for ROS (NITROS), which leverages type adaptation and negotiation to optimize message formats and dramatically accelerate communication between participating nodes.

DNN models

Each image segmentation package relies on a pre-trained DNN model. You can train your own models, download pre-trained models from one of the many model zoos available online, or use NGC, which provides pre-trained models for use in your robotics application.

NGC pre-trained models can be fine-tuned for your application using TAO, as in the examples provided. See here for more information on how to use various models from NVIDIA hosted on NGC.

U-NET

A U-NET model is required to use isaac_ros_unet.

PeopleSemSegNet provides a pre-trained model for best-in-class, real-time people segmentation.

This package has been validated against the following models:

| Model Name | Use Case |
|---|---|
| | Segment people from the point of view of a mobile robot |
| | Semantically segment people with high-speed inference |
| | Semantically segment people |

Segformer

A Segformer model is required to use isaac_ros_segformer.

PeopleSemSegFormer provides a pre-trained model for more effective real-time people segmentation.

This package has been validated against the following models:

| Model Name | Use Case |
|---|---|
| | Semantically segment people |

Segment Anything

The Segment Anything (SAM) model is required to use isaac_ros_segment_anything.

This model requires an additional prompt value to indicate what object in the image should be segmented.

This package has been validated against the following versions of SAM:

| Model Name | Description |
|---|---|
| | Full-accuracy model that can consume over 8 GB of GPU memory and requires more computational power |
| | Slimmer variant of SAM optimized for a smaller memory and compute footprint |

Segment Anything2

The Segment Anything2 (SAM2) model is required to use isaac_ros_segment_anything2.

This model requires an additional prompt value to indicate what object in the video stream should be segmented and tracked.

This package has been validated against the following versions of SAM2:

| Model Name | Description |
|---|---|
| | Segment and track any object in a video stream |

Packages

Supported Platforms

This package is designed and tested to be compatible with ROS 2 Jazzy running on Jetson, an x86_64 system with an NVIDIA GPU, or a DGX Spark workstation. Other GB10-based platforms may function, but they are not part of the test matrix and their behavior cannot be guaranteed.

| Platform | Hardware | Software | Storage | Notes |
|---|---|---|---|---|
| Jetson | | | 128+ GB NVMe SSD | For best performance, ensure that power settings are configured appropriately. |
| x86_64 | | | 32+ GB disk space available | |
| DGX | | | 32+ GB disk space available | For best performance, use the supplied power adapter with the DGX Spark system. |

Isaac ROS Environment

To simplify development, we strongly recommend leveraging the Isaac ROS CLI by following these steps. This streamlines your development environment setup with the correct versions of dependencies on all supported platforms.

Note

All Isaac ROS Quickstarts, tutorials, and examples have been designed with the Isaac ROS CLI-managed environment as a prerequisite.

Customize your Dev Environment

To customize your development environment, refer to this guide.

Updates

| Date | Changes |
|---|---|
| 2026-04-30 | Compatibility and integration updates for the Isaac ROS 4.4.0 release |
| 2026-03-23 | Introduced early-stage support for SIPL camera framework and LI Eagle stereo CoE/HSB camera with ROS |
| 2026-02-19 | Support for DGX Spark and JetPack 7.1 |
| 2026-02-02 | Support for two new Docker-optional development and deployment modes |
| 2025-10-24 | Add Segment Anything2 package |
| 2024-12-10 | Update to be compatible with JetPack 6.1 |
| 2024-09-26 | Update for ZED compatibility |
| 2024-05-30 | Add SegFormer and Segment Anything packages |
| 2023-10-18 | Updated for Isaac ROS 2.0.0 |
| 2023-05-25 | Performance improvements |
| 2023-04-05 | Source available GXF extensions |
| 2022-10-19 | Updated OSS licensing |
| 2022-08-31 | Update to be compatible with JetPack 5.0.2 |
| 2022-06-30 | Removed frame_id, queue_size and tensor_output_order parameters. Added network_output_type parameter (support for sigmoid and argmax output layers). Switched implementation to use NITROS. Removed support for odd-sized images. Switched tutorial to use PeopleSemSegNet ShuffleSeg and moved unnecessary details to other READMEs. |
| 2021-11-15 | Isaac Sim HIL documentation update |
| 2021-10-20 | Initial release |