Tutorial: Camera-based 3D Perception with Isaac Perceptor on the Nova Carter

This demo lets you use Isaac Perceptor to perceive the robot’s environment based purely on cameras. Isaac Perceptor uses Isaac ROS components, including Visual SLAM to localize the robot, and ESS and Nvblox to reconstruct 3D environments.

For this tutorial, it is assumed that you have successfully completed the teleop tutorial and the extrinsic calibration instructions.

Running the Application

SSH into the robot (instructions).

Make sure you have successfully connected the PS5 joystick to the robot (instructions).

Build or install the required packages and run the app using one of the following options (Docker image, Debian packages, or building from source):

Pull the Docker image:

docker pull nvcr.io/nvidia/isaac/nova_carter_bringup:release_3.2-aarch64

Run the Docker image:

docker run --privileged --network host \
    -v /dev/*:/dev/* \
    -v /tmp/argus_socket:/tmp/argus_socket \
    -v /etc/nova:/etc/nova \
    nvcr.io/nvidia/isaac/nova_carter_bringup:release_3.2-aarch64 \
    ros2 launch nova_carter_bringup perceptor.launch.py

Make sure you followed the Prerequisites and you are inside the Isaac ROS Docker container.

Install the required Debian packages:

sudo apt update
sudo apt-get install -y ros-humble-nova-carter-bringup
source /opt/ros/humble/setup.bash

Install the required assets:

sudo apt-get install -y ros-humble-isaac-ros-ess-models-install ros-humble-isaac-ros-peoplesemseg-models-install
source /opt/ros/humble/setup.bash
ros2 run isaac_ros_ess_models_install install_ess_models.sh --eula
ros2 run isaac_ros_peoplesemseg_models_install install_peoplesemsegnet_vanilla.sh --eula
ros2 run isaac_ros_peoplesemseg_models_install install_peoplesemsegnet_shuffleseg.sh --eula

Declare ROS_DOMAIN_ID with the same unique ID (number between 0 and 101) on every bash instance inside the Docker container:

export ROS_DOMAIN_ID=<unique ID>
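Because the same ID has to be set in every shell, a small guard function can catch typos before they cause nodes to miss each other. The sketch below is a hypothetical helper (`set_ros_domain_id` is not part of Isaac ROS); it only enforces the 0 to 101 range stated in this tutorial.

```shell
# Hypothetical helper: validate an ID and export it as ROS_DOMAIN_ID.
# The accepted range (0 to 101) is the one given in this tutorial.
set_ros_domain_id() {
    _domain_id="$1"
    case "$_domain_id" in
        ''|*[!0-9]*)
            # Empty input or anything containing a non-digit is rejected.
            echo "ROS_DOMAIN_ID must be a non-negative integer, got: '$_domain_id'" >&2
            return 1
            ;;
    esac
    if [ "$_domain_id" -gt 101 ]; then
        echo "ROS_DOMAIN_ID must be between 0 and 101, got: $_domain_id" >&2
        return 1
    fi
    export ROS_DOMAIN_ID="$_domain_id"
}
```

For example, `set_ros_domain_id 42` exports the variable, while `set_ros_domain_id 150` prints an error and leaves the current value untouched.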

Run the file:

ros2 launch nova_carter_bringup perceptor.launch.py

Make sure you followed the Prerequisites and you are inside the Isaac ROS Docker container.

Use rosdep to install the package’s dependencies:

sudo apt update
rosdep update
rosdep install -i -r --from-paths ${ISAAC_ROS_WS}/src/nova_carter/nova_carter_bringup/ \
    --rosdistro humble -y

Install the required assets:

sudo apt-get install -y ros-humble-isaac-ros-ess-models-install ros-humble-isaac-ros-peoplesemseg-models-install
source /opt/ros/humble/setup.bash
ros2 run isaac_ros_ess_models_install install_ess_models.sh --eula
ros2 run isaac_ros_peoplesemseg_models_install install_peoplesemsegnet_vanilla.sh --eula
ros2 run isaac_ros_peoplesemseg_models_install install_peoplesemsegnet_shuffleseg.sh --eula

Build the ROS package in the Docker container:

colcon build --symlink-install --packages-up-to nova_carter_bringup
source install/setup.bash

Declare ROS_DOMAIN_ID with the same unique ID (number between 0 and 101) on every bash instance inside the Docker container:

export ROS_DOMAIN_ID=<unique ID>

Run the file:

ros2 launch nova_carter_bringup perceptor.launch.py

You can now control the robot remotely with the gamepad. Follow the next steps to also visualize the sensor outputs in Foxglove.

Customizing Sensor Configurations

By default, as specified in the launch file perceptor.launch.py, the 3 stereo cameras available on the Nova Carter (front, left, right) are used in the Isaac Perceptor algorithms.

You may use the stereo_camera_configuration launch argument to customize the camera configuration when running Isaac Perceptor.

For example, to use only the front stereo camera for 3D reconstruction and visual SLAM, run the following launch command:

ros2 launch nova_carter_bringup perceptor.launch.py \
    stereo_camera_configuration:=front_configuration

Similarly, to use only the front stereo camera for 3D reconstruction, visual SLAM, and people reconstruction, run the following launch command:

ros2 launch nova_carter_bringup perceptor.launch.py \
    stereo_camera_configuration:=front_people_configuration

For a detailed description of all available configurations refer to the Tutorial: Stereo Camera Configurations for Isaac Perceptor.
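If you switch between configurations often, a thin wrapper that assembles the launch command can help avoid mistyped argument names. The following is a minimal sketch: `perceptor_launch_cmd` is a hypothetical helper, and it accepts only the two configuration names shown in this tutorial. Extend the list with names from the Stereo Camera Configurations tutorial as needed.

```shell
# Hypothetical helper: print the launch command for a stereo camera configuration.
# Only the two configuration names that appear in this tutorial are accepted.
perceptor_launch_cmd() {
    _config="$1"
    case "$_config" in
        front_configuration|front_people_configuration)
            printf 'ros2 launch nova_carter_bringup perceptor.launch.py stereo_camera_configuration:=%s\n' "$_config"
            ;;
        '')
            # No argument: use the launch file default (front, left, right cameras).
            printf 'ros2 launch nova_carter_bringup perceptor.launch.py\n'
            ;;
        *)
            echo "Unknown configuration: $_config" >&2
            return 1
            ;;
    esac
}
```

The function only prints the command, so it can be inspected first. Inside the container on the robot, it could then be executed with `eval "$(perceptor_launch_cmd front_configuration)"`.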

Mapping and Localization

To create maps and localize the robot, refer to Tutorial: Mapping and Localization with Isaac Perceptor.

Visualizing the Outputs

Make sure you complete Visualization Setup. This is required to visualize the Isaac nvblox mesh in a recommended layout configuration.

Open Foxglove Studio on your remote machine.

If you are not running a configuration with people reconstruction, open the nova_carter_perceptor.json layout file downloaded in the previous step.

If you are running a configuration with people reconstruction, open the nova_carter_perceptor_with_people.json layout file downloaded in the previous step.
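The choice of layout file follows directly from the configuration you launched, so it can be expressed as a small lookup. The sketch below uses a hypothetical helper, `foxglove_layout_for`, and assumes that every configuration with people reconstruction has "people" in its name, as front_people_configuration does. That naming rule is an assumption, not something stated by Isaac Perceptor.

```shell
# Hypothetical helper: name the Foxglove layout file for a launched configuration.
# Assumption: configurations with people reconstruction contain "people" in their name.
foxglove_layout_for() {
    case "$1" in
        *people*) echo "nova_carter_perceptor_with_people.json" ;;
        *)        echo "nova_carter_perceptor.json" ;;
    esac
}
```

For example, `foxglove_layout_for front_people_configuration` prints `nova_carter_perceptor_with_people.json`.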

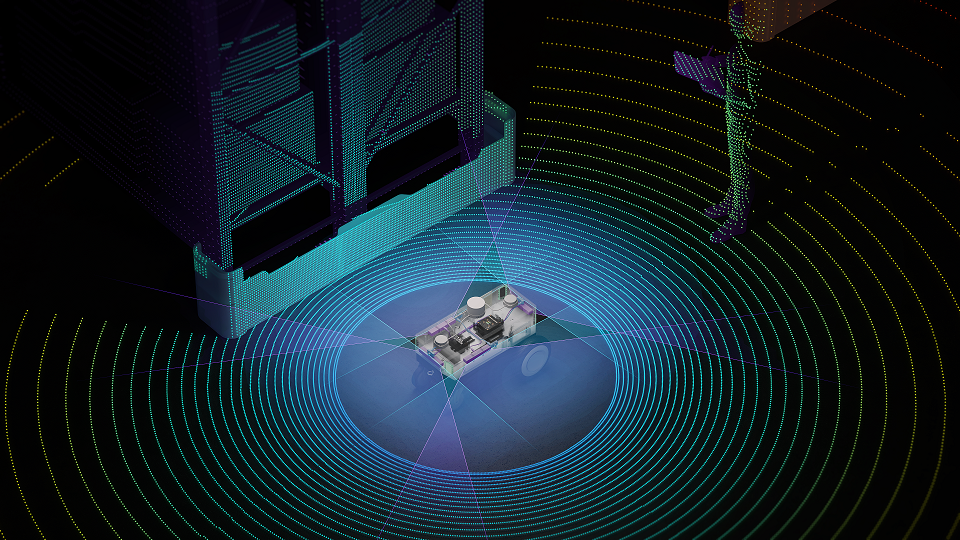

Foxglove shows a visualization of the Nova Carter robot and the Isaac nvblox mesh of the surrounding environment. Verify that you see a visualization similar to the image below. The mesh shows the reconstructed colored voxels and the distance map computed from the Isaac nvblox outputs. The colored voxels are reconstructed uniformly at a resolution of 5cm. The rainbow color spectrum reflects the proximity of each region to the nearest obstacle: regions closer to obstacle surfaces are marked in warmer colors (red, orange), while regions further away from obstacle surfaces are marked in cooler colors (blue, violet).

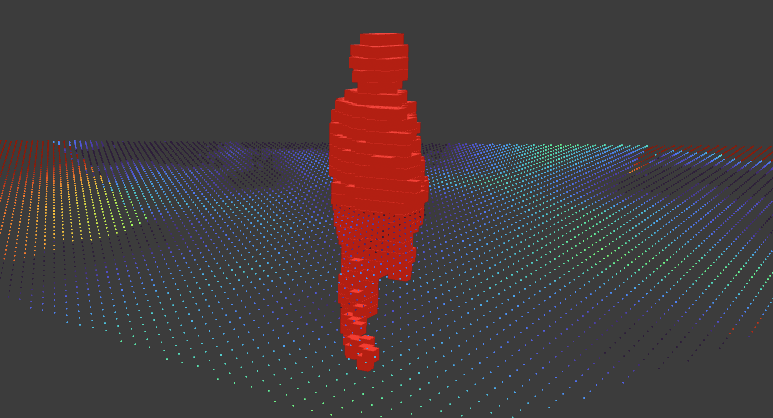

If you are running a configuration with people reconstruction, you also see highlighted red voxels in the reconstructed mesh. They visualize people perceived in the field of view of one or more cameras. In the default nova_carter_perceptor_with_people.json layout file, only /nvblox_node/dynamic_occupancy_layer is visualized, shown as the red voxels.

Note

For a better visualization experience, some topics requiring a large bandwidth are not available to Foxglove Studio by default. To make them available, pass use_foxglove_whitelist:=False as an additional argument when running the app. The image stream will most likely be fairly choppy given its large bandwidth.

To learn more about the topics published by Isaac nvblox, refer to nvblox ROS messages. For topics published by Isaac visual SLAM, refer to cuvslam ROS messages.

Evaluating Isaac Perceptor

Follow these instructions to verify that Isaac Perceptor is performing as expected.

Isaac nvblox continuously measures computation efficiency metrics and reports them in the terminal on request. To turn on this output, change the following settings from false to true in the file $ISAAC_ROS_WS/src/isaac_perceptor/isaac_ros_perceptor_bringup/params/nvblox_perceptor.yaml:

print_rates_to_console: true
print_delays_to_console: true
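The same change can be scripted instead of edited by hand. The following sketch uses a hypothetical helper, `enable_nvblox_console_metrics`, and assumes each flag sits on its own line in the form `<key>: false`. Check the file afterwards, since the actual layout of the parameter file is not shown in this tutorial.

```shell
# Hypothetical helper: flip the two console-reporting flags from false to true.
# Assumption: each flag is on its own line as "<key>: false" (any indentation).
enable_nvblox_console_metrics() {
    _params_file="$1"
    if [ ! -f "$_params_file" ]; then
        echo "File not found: $_params_file" >&2
        return 1
    fi
    _tmp_file="$(mktemp)" || return 1
    # Write the edited copy to a temp file, then copy it back so the original
    # file keeps its permissions. All other lines pass through unchanged.
    sed -e 's/^\([[:space:]]*print_rates_to_console:[[:space:]]*\)false/\1true/' \
        -e 's/^\([[:space:]]*print_delays_to_console:[[:space:]]*\)false/\1true/' \
        "$_params_file" > "$_tmp_file" && cat "$_tmp_file" > "$_params_file"
    _status=$?
    rm -f "$_tmp_file"
    return "$_status"
}
```

It would be called with the path given above, for example `enable_nvblox_console_metrics "$ISAAC_ROS_WS/src/isaac_perceptor/isaac_ros_perceptor_bringup/params/nvblox_perceptor.yaml"`.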

Verify that you see metrics similar to the following:

nvblox Rates reports the frequencies of specific events in Nvblox. For the 3-camera configuration, expect ros/depth_image_callback at 60Hz and ros/color_image_callback at 90Hz. For the 1-camera configuration, expect ros/depth_image_callback at 30Hz and ros/color_image_callback at 30Hz.

nvblox Rates (in Hz)
namespace/tag - NumSamples (Window Length) - Mean
-----------
ros/color                   100   13.2
ros/depth                   100   60.4
ros/depth_image_callback    100   60.8
ros/color_image_callback    100   90.8
ros/update_esdf             100   7.2
mapper/stream_mesh          100   4.5
ros/tick                    100   16.7
-----------

nvblox Delays reports the average compute time of a node, in seconds, over a certain number of samples. Expect ros/esdf_integration to be between 100 and 200 ms.

nvblox Delays
namespace/tag - NumSamples (Window Length) - Mean Delay (seconds)
-----------
ros/esdf_integration           100   0.174
ros/depth_image_integration    100   0.152
ros/color_image_integration    100   0.129
ros/depth_image_callback       100   0.106
ros/color_image_callback       100   0.068
-----------
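Comparing these reports against the expected values can be automated once the terminal output is saved or piped. The sketch below assumes each data row has exactly three whitespace-separated fields (tag, sample count, mean), as in the samples above. `metric_mean` and `in_range` are hypothetical helpers, and the 54 to 66 Hz window is an arbitrary tolerance around the expected 60Hz, not a figure from Isaac nvblox.

```shell
# Hypothetical helper: read a rates or delays report on stdin and print the
# mean for one tag. Fails if the tag is absent.
# Assumption: data rows have three fields: tag, number of samples, mean.
metric_mean() {
    awk -v tag="$1" '$1 == tag && NF == 3 { print $3; found = 1; exit }
                     END { if (!found) exit 1 }'
}

# Hypothetical helper: succeed if value lies in [min, max].
# awk performs the floating-point comparison.
in_range() {
    awk -v v="$1" -v lo="$2" -v hi="$3" \
        'BEGIN { exit !(v + 0 >= lo + 0 && v + 0 <= hi + 0) }'
}

# Two rows copied from the sample rates report above.
sample_rates='ros/depth                   100   60.4
ros/depth_image_callback    100   60.8'

depth_rate="$(printf '%s\n' "$sample_rates" | metric_mean ros/depth_image_callback)"
if in_range "$depth_rate" 54 66; then
    echo "depth callback rate OK: $depth_rate Hz"
fi
```

The same pair works for delays. For example, `in_range 0.174 0.1 0.2` succeeds for the ros/esdf_integration value shown above, matching the expected 100 to 200 ms.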

To further evaluate the quality of Isaac Perceptor, you can perform the following tests.

Choose objects of various heights above 10cm, and place them in front of any camera specified in the stereo_camera_configuration launch argument. Vary the distance to the camera (e.g. 1m, 3m, 5m, 7m). Verify that you see the object in the distance map visualization. You may also use the Measure Tool in the top panel to measure the distance between the object surface and the camera center.

For instance, a pallet with a height of 10cm and a box with a height of 50cm are placed in front of the front stereo camera, and their distance to the robot is varied between 0.4m, 1.0m, 3.0m, and 5.0m. In the 3D view, you can see colored voxels visualizing the pallet and the box (illustrated by bounding boxes in green and blue). The proximity value reported by the Measure Tool is the distance between the front camera and the perceived objects. Expect a proximity value similar to the distance at which you placed the objects.
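When repeating this test at several distances, it can help to record the error for each placement. The following sketch compares a Measure Tool reading with the placed distance. `distance_matches` is a hypothetical helper, and its default tolerance of 0.1m is an illustrative choice, not an accuracy figure for Isaac Perceptor.

```shell
# Hypothetical helper: compare a measured proximity value with the placed distance.
# Arguments: measured (m), placed (m), optional tolerance (m, default 0.1).
# The default tolerance is an illustrative assumption.
distance_matches() {
    _measured="$1"
    _placed="$2"
    _tolerance="${3:-0.1}"
    awk -v m="$_measured" -v p="$_placed" -v t="$_tolerance" 'BEGIN {
        err = m - p
        if (err < 0) err = -err   # absolute error
        printf "placed %.2f m, measured %.2f m, error %.2f m\n", p, m, err
        exit !(err <= t)          # exit status 0 when within tolerance
    }'
}
```

For example, `distance_matches 3.05 3.0` succeeds, while `distance_matches 3.5 3.0` returns a non-zero status.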

Refer to Technical Details to understand how scene reconstruction works.

Ensure Nova Carter is stationary. Expect no drift of the Nova Carter robot visualization relative to the odometry frame. Refer to cuVSLAM to understand how Visual SLAM works.

Running Isaac Perceptor on Recorded Data

Apart from running camera-based perception on the Nova Carter robot, you can record data on the robot and run Isaac Perceptor on the recorded data. See the Tutorial: Recording and Playing Back Data for Isaac Perceptor.