Isaac ROS Pose Estimation#

Pose estimation and tracking using FoundationPose in a difficult scene with camera motion blur, round objects with few features, reflective object material, and light reflections#

Overview#

Isaac ROS Pose Estimation contains three ROS 2 packages to predict the pose of an object. Refer to the following table to see how they differ:

| Node | Novel Object w/o Retraining | TAO Support | Speed | Quality | Maturity |
|---|---|---|---|---|---|
| isaac_ros_foundationpose | ✓ | N/A | Fast | Best | New |
| isaac_ros_dope | x | x | Fastest | Good | Time-tested |
| isaac_ros_centerpose | x | ✓ | Faster | Better | Established |

These packages use GPU acceleration for DNN inference to estimate the pose of an object. The output prediction can be used by perception functions when fused with the corresponding depth to provide the 3D pose of an object and its distance for navigation or manipulation.
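As a rough illustration of fusing a 2D prediction with depth, the sketch below back-projects a pixel into the camera frame with the standard pinhole model and computes its range. The function names, intrinsics, and pixel values are hypothetical and are not part of any Isaac ROS API.

```python
import math


def back_project(u, v, depth_m, fx, fy, cx, cy):
    """Pinhole back-projection of pixel (u, v) with metric depth into the camera frame."""
    x = (u - cx) * depth_m / fx
    y = (v - cy) * depth_m / fy
    return (x, y, depth_m)


def distance_to(point):
    """Euclidean distance from the camera origin to a 3D point."""
    return math.sqrt(sum(c * c for c in point))


# Hypothetical intrinsics and detection center, for illustration only.
center = back_project(u=420, v=240, depth_m=2.0, fx=500.0, fy=500.0, cx=320.0, cy=240.0)
range_m = distance_to(center)
```

A navigation or manipulation stack would typically consume the resulting 3D point or range rather than the raw pixel coordinates.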

isaac_ros_foundationpose is used in a graph of nodes to estimate the pose of a novel object using 3D bounding cuboid dimensions. It’s developed on top of the FoundationPose model, which is a pre-trained deep learning model developed by NVLabs. FoundationPose is capable of both pose estimation and tracking on unseen objects without requiring fine-tuning, and its accuracy outperforms existing state-of-the-art methods.

FoundationPose comprises two distinct models: the refine model and the score model. The refine model processes initial pose hypotheses, iteratively refining them, then passes these refined hypotheses to the score model, which selects and finalizes the pose estimate. Additionally, the refine model can be used for tracking, where it updates the pose estimate based on new image inputs and the previous frame’s pose estimate. Tracking is more efficient than pose estimation, with speeds exceeding 120 FPS on the Jetson Thor platform.

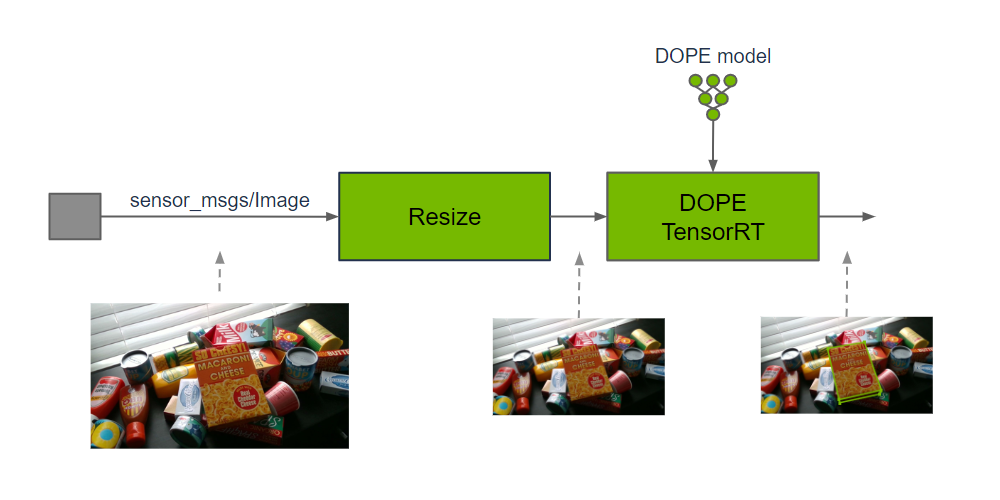
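The control flow of the refine and score models can be sketched as follows. This is a minimal illustration, not the actual FoundationPose implementation: the `refine` and `score` callables are toy stand-ins for the two networks, image inputs are omitted, and the iteration count and the pose representation (a plain list of floats) are assumptions.

```python
def estimate_pose(hypotheses, refine, score, iterations=3):
    """Refine every hypothesis, then keep the one the score model ranks highest."""
    refined = []
    for pose in hypotheses:
        for _ in range(iterations):  # iteration count is an assumption
            pose = refine(pose)
        refined.append(pose)
    scores = [score(p) for p in refined]
    best_index = max(range(len(refined)), key=scores.__getitem__)
    return refined[best_index]


def track_pose(previous_pose, refine):
    """Tracking: one refine pass seeded with the previous frame's pose, no scoring."""
    return refine(previous_pose)


# Toy stand-ins for the two networks (NOT the real models).
# The "true" pose is all zeros; refine moves halfway toward it.
def toy_refine(pose):
    return [0.5 * v for v in pose]


def toy_score(pose):
    return -sum(v * v for v in pose)  # higher is better


best = estimate_pose([[4.0, 0.0], [1.0, 1.0]], toy_refine, toy_score)
tracked = track_pose(best, toy_refine)
```

The sketch shows why tracking is cheaper: it skips both the multiple hypotheses and the scoring step, and runs the refine step once from a good starting point.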

isaac_ros_dope is used in a graph of nodes to estimate the pose of a known object with 3D bounding cuboid dimensions. To produce the estimate, a DOPE (Deep Object Pose Estimation) pre-trained model is required. Input images may need to be cropped and resized to maintain the aspect ratio and match the input resolution of DOPE. After DNN inference has produced an estimate, the DNN decoder will use the specified object type, along with the belief maps produced by model inference, to output object poses.
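One way to crop before resizing so that the aspect ratio is preserved is a centered crop to the target aspect ratio. The sketch below only computes the crop rectangle; the function name and the example resolutions are hypothetical, and this is not necessarily how the Isaac ROS DNN Image Encoder handles it.

```python
def center_crop_box(src_w, src_h, dst_w, dst_h):
    """Largest centered crop of the source that has the destination aspect ratio.

    Returns (x0, y0, crop_w, crop_h) in source pixel coordinates.
    """
    # Compare aspect ratios by integer cross-multiplication to avoid float error.
    if src_w * dst_h > src_h * dst_w:
        # Source is wider than the target: trim columns.
        crop_h = src_h
        crop_w = src_h * dst_w // dst_h
    else:
        # Source is taller than (or equal to) the target: trim rows.
        crop_w = src_w
        crop_h = src_w * dst_h // dst_w
    x0 = (src_w - crop_w) // 2
    y0 = (src_h - crop_h) // 2
    return x0, y0, crop_w, crop_h


# Hypothetical example: a 1920x1080 camera image feeding a 640x480 network input.
box = center_crop_box(1920, 1080, 640, 480)
```

After cropping to this rectangle, a plain resize to the network input resolution no longer distorts the object.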

NVLabs has provided a DOPE pre-trained model using the HOPE dataset. HOPE stands for Household Objects for Pose Estimation. HOPE is a research-oriented dataset that uses toy grocery objects and 3D textured meshes of the objects for training on synthetic data. To use DOPE for other objects that are relevant to your application, the model needs to be trained with another dataset targeting these objects. For example, DOPE has been trained to detect dollies for use with a mobile robot that navigates under, lifts, and moves that type of dolly. To train your own DOPE model, refer to the Training your Own DOPE Model Tutorial.

isaac_ros_centerpose has similarities to isaac_ros_dope in that both estimate an object pose; however, isaac_ros_centerpose provides additional functionality. The CenterPose DNN performs object detection on the image, generates 2D keypoints for the object, estimates the 6-DoF pose up to a scale, and regresses relative 3D bounding cuboid dimensions. This is performed on a known object class without knowing the instance. For example, a CenterPose model can detect a chair without having been trained on images of that specific chair.
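To make "up to a scale" concrete: under a pinhole camera, an object and its translation can be scaled by the same factor without changing the image, so a single known metric dimension is enough to fix the scale. The sketch below assumes the relative dimensions and the translation share one unknown scale factor; the function name, argument layout, and values are hypothetical and do not reflect the CenterPose decoder's actual output format.

```python
def apply_metric_scale(relative_dims, scaled_translation, known_index, known_dim_m):
    """Resolve the scale ambiguity using one known metric dimension.

    relative_dims: cuboid dimensions in arbitrary (relative) units.
    scaled_translation: object position in the same arbitrary units.
    known_index: which entry of relative_dims has a known real size.
    known_dim_m: that real size in meters.
    """
    k = known_dim_m / relative_dims[known_index]
    dims_m = [d * k for d in relative_dims]
    translation_m = [t * k for t in scaled_translation]
    return dims_m, translation_m


# Hypothetical example: the second dimension is known to be 0.8 m.
dims_m, translation_m = apply_metric_scale(
    relative_dims=[0.5, 1.0, 0.5],
    scaled_translation=[0.2, 0.0, 2.0],
    known_index=1,
    known_dim_m=0.8,
)
```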

Pose estimation is a compute-intensive task and therefore not performed at the frame rate of an input camera. To make efficient use of resources, object pose is estimated for a single frame and used as an input to navigation. Additional object pose estimates are computed to further refine navigation in progress at a lower frequency than the input rate of a typical camera.
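Running pose estimation below the camera rate can be sketched as a simple frame stride. The function name and the example rates are assumptions for illustration; a real graph would more likely throttle on message timestamps than on frame indices.

```python
def frames_to_process(frame_indices, camera_hz, estimate_hz):
    """Pick the frames on which to run pose estimation at a reduced rate."""
    # Never use a stride below 1, even if estimate_hz exceeds camera_hz.
    stride = max(1, round(camera_hz / estimate_hz))
    return [i for i in frame_indices if i % stride == 0]


# Hypothetical example: one second of a 30 Hz camera, estimating poses at 5 Hz.
selected = frames_to_process(range(30), camera_hz=30, estimate_hz=5)
```

Frames that are not selected are skipped by the pose estimator, leaving GPU time for other perception tasks.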

Packages in this repository rely on accelerated DNN model inference using Triton or TensorRT from Isaac ROS DNN Inference. For preprocessing, packages in this repository rely on the Isaac ROS DNN Image Encoder, which can also be found at Isaac ROS DNN Inference.

Quickstarts#

Packages#

Supported Platforms#

This package is designed and tested to be compatible with ROS 2 Jazzy running on Jetson, an x86_64 system with an NVIDIA GPU, or a DGX Spark workstation. Other GB10-based platforms may function, but they are not part of the test matrix and their behavior cannot be guaranteed.

| Platform | Hardware | Software | Storage | Notes |
|---|---|---|---|---|
| Jetson | | | 128+ GB NVMe SSD | For best performance, ensure that power settings are configured appropriately. |
| x86_64 | | | 32+ GB disk space available | |
| DGX | | | 32+ GB disk space available | For best performance, use the supplied power adapter with the DGX Spark system. |

Isaac ROS Environment#

To simplify development, we strongly recommend leveraging the Isaac ROS CLI by following these steps. This streamlines your development environment setup with the correct versions of dependencies on all supported platforms.

Note

All Isaac ROS Quickstarts, tutorials, and examples have been designed with the Isaac ROS CLI-managed environment as a prerequisite.

Customize your Dev Environment#

To customize your development environment, refer to this guide.

Updates#

| Date | Changes |
|---|---|
| 2026-04-30 | Compatibility and integration updates for the Isaac ROS 4.4.0 release |
| 2026-03-23 | Introduced early-stage support for SIPL camera framework and LI Eagle stereo CoE/HSB camera with ROS |
| 2026-02-19 | Support for DGX Spark and JetPack 7.1 |
| 2026-02-02 | Support for two new Docker-optional development and deployment modes |
| 2025-10-24 | Added synchronization node tuned for real-time performance and minor FoundationPose model update for TensorRT 10.13 |
| 2024-12-10 | Added pose estimate post-processing utilities |
| 2024-09-26 | Update for ZED compatibility |
| 2024-05-30 | Added FoundationPose pose estimation package |
| 2023-10-18 | Adding NITROS CenterPose decoder |
| 2023-05-25 | Performance improvements |
| 2023-04-05 | Source available GXF extensions |
| 2022-06-30 | Update to use NITROS for improved performance and to be compatible with JetPack 5.0.2 |
| 2022-06-30 | Refactored README, updated launch file & added |
| 2021-10-20 | Initial update |