isaac_ros_unet#

Source code available on GitHub.

Quickstart#

Set Up Development Environment#

Set up your development environment by following the instructions in getting started.

(Optional) Install dependencies for any sensors you want to use by following the sensor-specific guides.

Note

We strongly recommend installing all sensor dependencies before starting any quickstarts. Some sensor dependencies require restarting the development environment during installation, which will interrupt the quickstart process.

Download Quickstart Assets#

Download quickstart data from NGC:

Make sure required libraries are installed.

sudo apt-get install -y curl jq tar

Then, run these commands to download the asset from NGC:

NGC_ORG="nvidia"
NGC_TEAM="isaac"
PACKAGE_NAME="isaac_ros_unet"
NGC_RESOURCE="isaac_ros_unet_assets"
NGC_FILENAME="quickstart.tar.gz"
MAJOR_VERSION=4
MINOR_VERSION=4
VERSION_REQ_URL="https://catalog.ngc.nvidia.com/api/resources/versions?orgName=$NGC_ORG&teamName=$NGC_TEAM&name=$NGC_RESOURCE&isPublic=true&pageNumber=0&pageSize=100&sortOrder=CREATED_DATE_DESC"
AVAILABLE_VERSIONS=$(curl -s \
    -H "Accept: application/json" "$VERSION_REQ_URL")
LATEST_VERSION_ID=$(echo $AVAILABLE_VERSIONS | jq -r "
    .recipeVersions[]
    | .versionId as \$v
    | \$v | select(test(\"^\\\\d+\\\\.\\\\d+\\\\.\\\\d+$\"))
    | split(\".\") | {major: .[0]|tonumber, minor: .[1]|tonumber, patch: .[2]|tonumber}
    | select(.major == $MAJOR_VERSION and .minor <= $MINOR_VERSION)
    | \$v
    " | sort -V | tail -n 1
)
if [ -z "$LATEST_VERSION_ID" ]; then
    echo "No corresponding version found for Isaac ROS $MAJOR_VERSION.$MINOR_VERSION"
    echo "Found versions:"
    echo $AVAILABLE_VERSIONS | jq -r '.recipeVersions[].versionId'
else
    mkdir -p ${ISAAC_ROS_WS}/isaac_ros_assets && \
    FILE_REQ_URL="https://api.ngc.nvidia.com/v2/resources/$NGC_ORG/$NGC_TEAM/$NGC_RESOURCE/\
versions/$LATEST_VERSION_ID/files/$NGC_FILENAME" && \
    curl -LO --request GET "${FILE_REQ_URL}" && \
    tar -xf ${NGC_FILENAME} -C ${ISAAC_ROS_WS}/isaac_ros_assets && \
    rm ${NGC_FILENAME}
fi
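The download script above picks the newest asset version whose major version matches `MAJOR_VERSION` and whose minor version does not exceed `MINOR_VERSION`. The following is a minimal offline sketch of that selection rule, useful for checking the logic without contacting NGC. The helper name `pick_latest_version` and the sample version strings are made up for illustration; like the script above, it relies on GNU `sort -V`.

```shell
# Hypothetical helper: reads version strings on stdin, one per line, and
# prints the newest X.Y.Z version with major == $1 and minor <= $2.
pick_latest_version() {
    local major="$1" minor="$2"
    # Keep only strict X.Y.Z strings, filter on major/minor, then take the
    # highest remaining version according to version sort.
    grep -E '^[0-9]+\.[0-9]+\.[0-9]+$' \
        | awk -F. -v M="$major" -v m="$minor" '$1 == M && $2 <= m' \
        | sort -V | tail -n 1
}

# Made-up sample input; "latest" and 4.5.0 are filtered out.
printf '%s\n' 3.2.0 4.3.1 4.4.0 4.4.2 4.5.0 latest | pick_latest_version 4 4
```

If no version qualifies, the function prints nothing, which corresponds to the empty `LATEST_VERSION_ID` branch of the script.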

Build isaac_ros_unet#

Activate the Isaac ROS environment:

isaac-ros activate

Install the prebuilt Debian package:

sudo apt-get update

sudo apt-get install -y ros-jazzy-isaac-ros-unet

To build from source instead of installing the prebuilt package, first install Git LFS:

sudo apt-get install -y git-lfs && git lfs install

Clone this repository under ${ISAAC_ROS_WS}/src:

cd ${ISAAC_ROS_WS}/src && \
   git clone -b release-4.4 https://github.com/NVIDIA-ISAAC-ROS/isaac_ros_image_segmentation.git isaac_ros_image_segmentation

Activate the Isaac ROS environment:

isaac-ros activate

Use rosdep to install the package’s dependencies:

sudo apt-get update

rosdep update && rosdep install --from-paths ${ISAAC_ROS_WS}/src/isaac_ros_image_segmentation/isaac_ros_unet --ignore-src -y

Build the package from source:

cd ${ISAAC_ROS_WS} && \
   colcon build --symlink-install --packages-up-to isaac_ros_unet --base-paths ${ISAAC_ROS_WS}/src/isaac_ros_image_segmentation/isaac_ros_unet

Source the ROS workspace:

Note

Make sure to repeat this step in every terminal created inside the Isaac ROS environment.

Because this package was built from source, the enclosing workspace must be sourced for ROS to be able to find the package’s contents.

source install/setup.bash

Prepare PeopleSemSegnet Model#

Download and install model assets inside the Isaac ROS environment:

sudo apt-get install -y ros-jazzy-isaac-ros-peoplesemseg-models-install && ros2 run isaac_ros_peoplesemseg_models_install install_peoplesemsegnet_vanilla.sh --eula && ros2 run isaac_ros_peoplesemseg_models_install install_peoplesemsegnet_shuffleseg.sh --eula
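Before moving on to the launch step, it can help to confirm that the files the launch commands refer to are actually in place. The following is a minimal sketch, not part of Isaac ROS: `check_assets` is a hypothetical helper, and the paths in the commented usage are the ones passed to the launch commands in this quickstart.

```shell
# Hypothetical helper: returns 0 if every path given as an argument is an
# existing regular file, otherwise reports the missing ones on stderr and
# returns 1.
check_assets() {
    local missing=0 f
    for f in "$@"; do
        if [ ! -f "$f" ]; then
            echo "missing: $f" >&2
            missing=1
        fi
    done
    return "$missing"
}

# Example usage (assumes ISAAC_ROS_WS is set in the current terminal):
# check_assets \
#     "${ISAAC_ROS_WS}/isaac_ros_assets/isaac_ros_unet/quickstart_interface_specs.json" \
#     "${ISAAC_ROS_WS}/isaac_ros_assets/models/peoplesemsegnet/deployable_quantized_vanilla_unet_onnx_v2.0/1/model.plan" \
#     && echo "assets found"
```

A missing `model.plan` usually means the model install step has not finished or did not run inside the Isaac ROS environment.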

Run Launch File#

Continuing inside the Isaac ROS environment, install the following dependencies:

sudo apt-get update

sudo apt-get install -y ros-jazzy-isaac-ros-examples

Run the following launch file to spin up a demo of this package using the quickstart rosbag:

ros2 launch isaac_ros_examples isaac_ros_examples.launch.py launch_fragments:=unet interface_specs_file:=${ISAAC_ROS_WS}/isaac_ros_assets/isaac_ros_unet/quickstart_interface_specs.json engine_file_path:=${ISAAC_ROS_WS}/isaac_ros_assets/models/peoplesemsegnet/deployable_quantized_vanilla_unet_onnx_v2.0/1/model.plan input_binding_names:=['input_1:0']

Open a second terminal inside the Isaac ROS environment:

isaac-ros activate

Run the rosbag file to simulate an image stream:

ros2 bag play -l ${ISAAC_ROS_WS}/isaac_ros_assets/isaac_ros_unet/quickstart.bag

Ensure that you have already set up your RealSense camera using the RealSense setup tutorial. If you have not, set up the sensor and then restart this quickstart from the beginning.

Continuing inside the Isaac ROS environment, install the following dependencies:

sudo apt-get update

sudo apt-get install -y ros-jazzy-isaac-ros-examples ros-jazzy-isaac-ros-realsense

Run the following launch file to spin up a demo of this package using a RealSense camera:

ros2 launch isaac_ros_examples isaac_ros_examples.launch.py launch_fragments:=realsense_mono_rect,unet engine_file_path:=${ISAAC_ROS_WS}/isaac_ros_assets/models/peoplesemsegnet/deployable_quantized_vanilla_unet_onnx_v2.0/1/model.plan input_binding_names:=['input_1:0'] output_binding_names:=['argmax_1'] network_output_type:='argmax'

Ensure that you have already set up your ZED camera using the ZED setup tutorial.

Continuing inside the Isaac ROS environment, install dependencies:

sudo apt-get update

sudo apt-get install -y ros-jazzy-isaac-ros-examples ros-jazzy-isaac-ros-image-proc ros-jazzy-isaac-ros-zed

Run the following launch file to spin up a demo of this package using a ZED Camera:

ros2 launch isaac_ros_examples isaac_ros_examples.launch.py \
   launch_fragments:=zed_mono_rect,unet \
   engine_file_path:=${ISAAC_ROS_WS}/isaac_ros_assets/models/peoplesemsegnet/deployable_quantized_vanilla_unet_onnx_v2.0/1/model.plan input_binding_names:=['input_1:0'] output_binding_names:=['argmax_1'] network_output_type:='argmax' \
   interface_specs_file:=${ISAAC_ROS_WS}/isaac_ros_assets/isaac_ros_unet/zed2_quickstart_interface_specs.json

Note

If you are using the ZED X series, replace zed2_quickstart_interface_specs.json with zedx_quickstart_interface_specs.json in the above command.
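If the same terminal setup is used with different ZED cameras, the choice of interface specs file can be scripted. This is only a sketch: `zed_specs_file` is a hypothetical helper, and the series names `zed2` and `zedx` are labels chosen here for illustration. Only the two file names come from this quickstart.

```shell
# Hypothetical helper: prints the interface specs file name for a ZED
# camera series. Anything other than "zedx" falls back to the zed2 file.
zed_specs_file() {
    case "$1" in
        zedx) echo "zedx_quickstart_interface_specs.json" ;;
        *)    echo "zed2_quickstart_interface_specs.json" ;;
    esac
}

# Print the file name to use for a ZED X series camera.
zed_specs_file zedx
```

The printed name can then be appended to `${ISAAC_ROS_WS}/isaac_ros_assets/isaac_ros_unet/` when setting `interface_specs_file`.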

Note

If you want to use the shuffleseg model, replace the engine_file_path with the shuffleseg engine location, set the input_binding_names to ['input_2'], and set use_planar_input to False.

Visualize Results#

Open a new terminal inside the Isaac ROS environment:

isaac-ros activate

Install rqt_image_view:

sudo apt-get install -y ros-jazzy-rqt-image-view

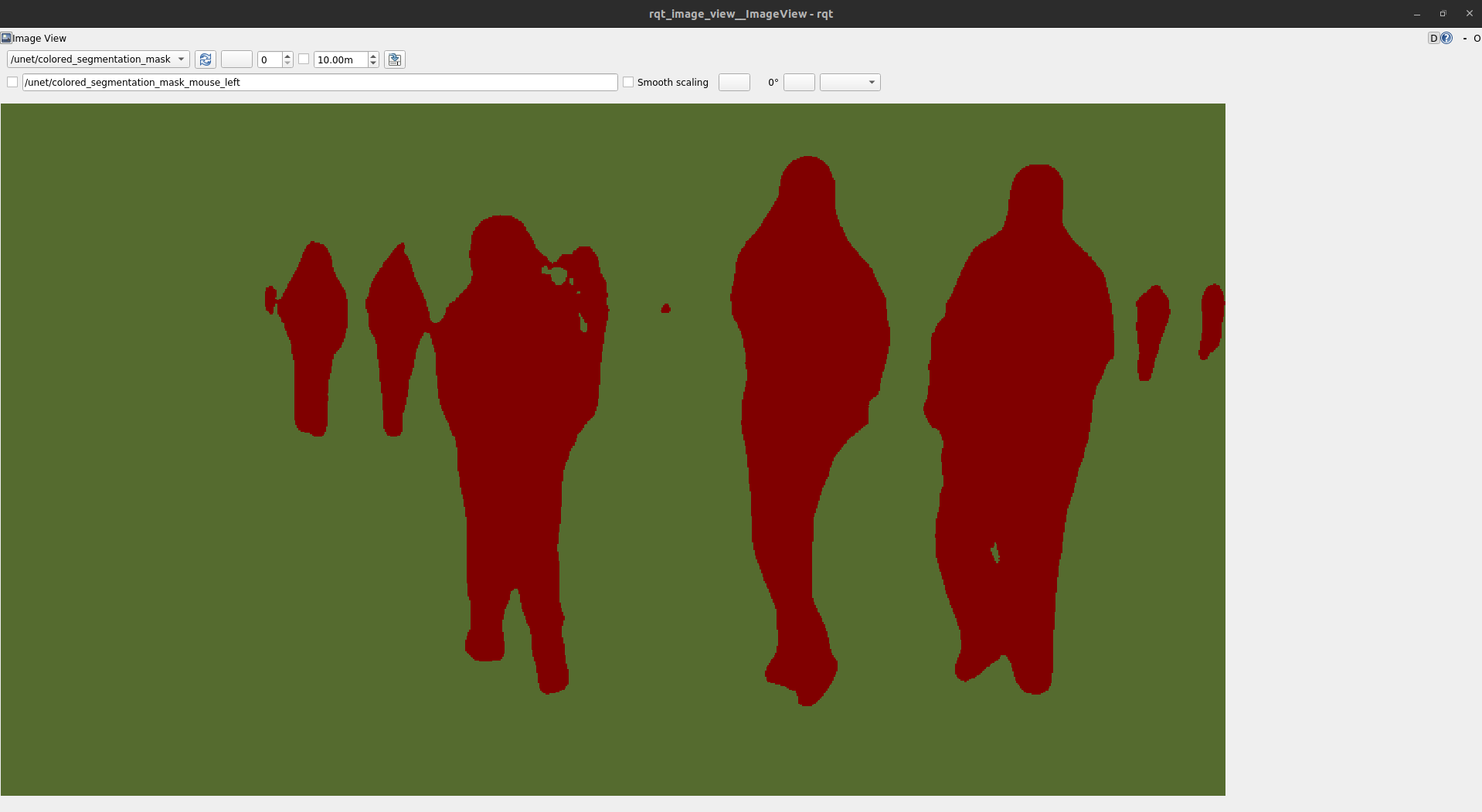

Visualize and validate the output of the package with rqt_image_view:

ros2 run rqt_image_view rqt_image_view /unet/colored_segmentation_mask

After about 1 minute, your output should look like this:

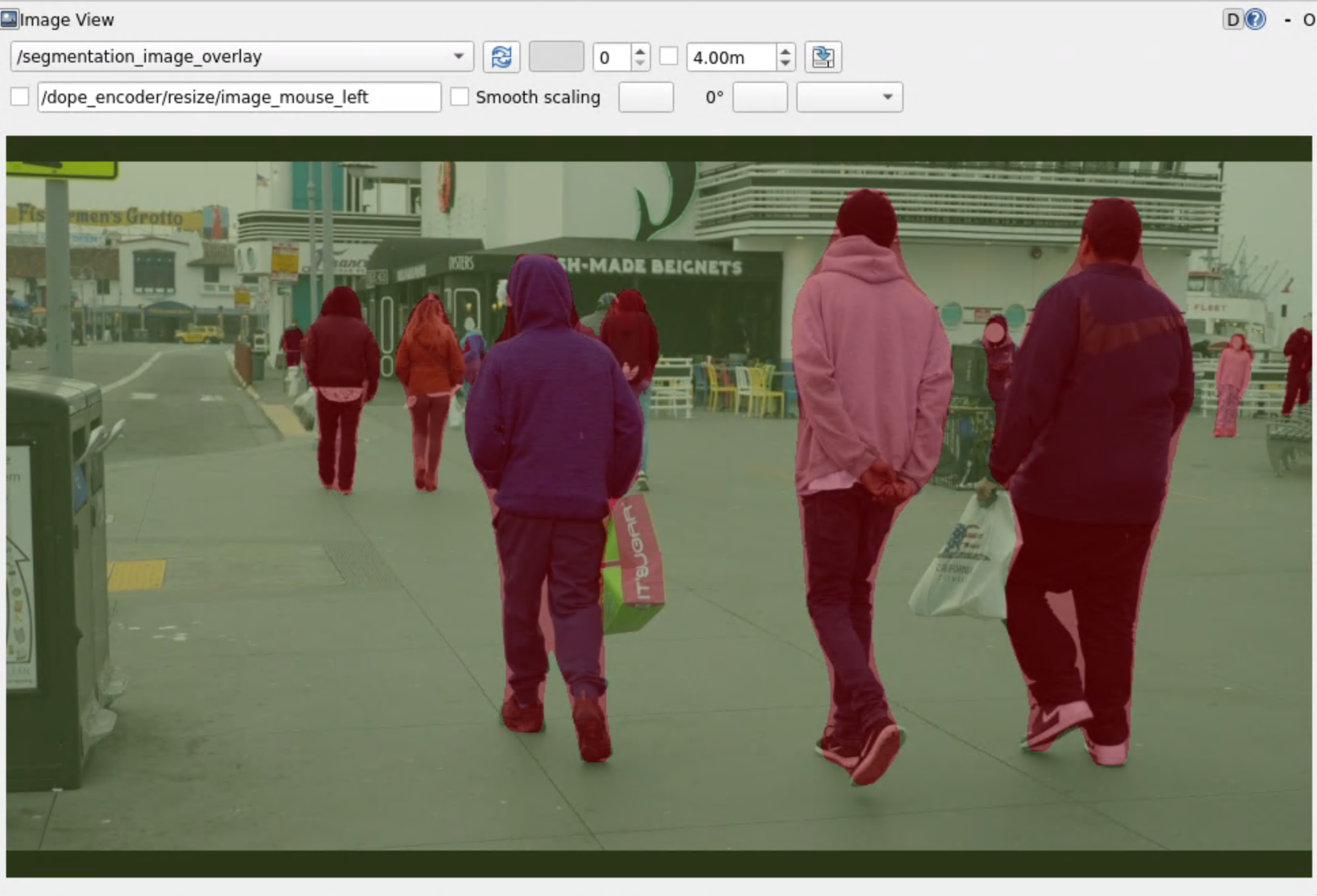

Visualize the blended image with rqt_image_view:

ros2 run rqt_image_view rqt_image_view /segmentation_image_overlay

Your output should look like this:

Try More Examples#

To continue your exploration, check out the following suggested examples:

Troubleshooting#

Isaac ROS Troubleshooting#

For solutions to problems with Isaac ROS, see troubleshooting.

Deep Learning Troubleshooting#

For solutions to problems with using DNN models, see troubleshooting deeplearning.

API#

Usage#

Two launch files are provided to use this package. The first launch file launches isaac_ros_tensor_rt, whereas the second uses isaac_ros_triton. Both include the necessary components to perform encoding on images and decoding of U-Net’s output.

Note

For your specific application, these launch files may need to be modified. Consult the available components to see the configurable parameters.

| Launch File | Components Used |
|---|---|
| | |

UNetDecoderNode#

ROS Parameters#

| ROS Parameter | Type | Default | Description |
|---|---|---|---|
| | | | The image encoding of the colored segmentation mask. Supported values: |
| | | | Vector of integers where each element represents the RGB color hex code for the corresponding class |
| network_output_type | | | The type of output that the network provides. Supported values: softmax, sigmoid, argmax |
| | | | The width of the segmentation mask. |
| | | | The height of the segmentation mask. |
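The color palette parameter is described above as a vector of integers, each holding the RGB hex code for one class. As a minimal sketch of how such a value maps to color channels, assuming the common `0xRRGGBB` packing (the packing order is an assumption here, not something this page states), a hypothetical helper `hex_to_rgb` can split one entry into its components:

```shell
# Hypothetical helper: splits an integer color code (assumed 0xRRGGBB)
# into decimal red, green, and blue components.
hex_to_rgb() {
    local color=$(( $1 ))
    printf '%d %d %d\n' \
        $(( (color >> 16) & 0xFF )) \
        $(( (color >> 8) & 0xFF )) \
        $(( color & 0xFF ))
}

# Illustrative value only, not a default of the node.
hex_to_rgb 0x1F77B4   # prints "31 119 180"
```

Under this assumption, the entry at index 0 of the palette colors class 0, the entry at index 1 colors class 1, and so on.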

Note

The model output should be NCHW or NHWC. In this context, the C refers to the class.

For the network_output_type, the softmax and sigmoid options expect a single 32-bit floating-point tensor. For the argmax option, a single signed 32-bit integer tensor is expected.

Models with more than 255 classes are not supported. If a class label greater than 255 is detected, it is downcast to 255 in the raw segmentation mask.
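To make the relationship between per-class scores and class labels concrete, the sketch below takes one line of scores per pixel, picks the index of the largest score, and limits the result to 255. This is an illustration of the general idea for a softmax-style output only. It is not the node's implementation, `argmax_labels` is a hypothetical helper, and treating the downcast as saturation at 255 is an assumption.

```shell
# Hypothetical helper: each input line holds the class scores for one
# pixel, separated by spaces. Prints the zero-based index of the highest
# score, limited to 255 so it fits in a mono8 pixel.
argmax_labels() {
    awk '{
        best = 1
        for (i = 2; i <= NF; i++) if ($i > $best) best = i
        label = best - 1
        if (label > 255) label = 255
        print label
    }'
}

# Two made-up pixels with three classes each.
printf '%s\n' '0.1 0.7 0.2' '0.9 0.05 0.05' | argmax_labels
```

With the argmax option, the network already provides integer labels, so a step like this is only relevant when the tensor holds scores.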

ROS Topics Subscribed#

| ROS Topic | Interface | Description |
|---|---|---|
| | | The tensor that contains raw probabilities for every class in each pixel. |

Note

All input images are required to have height and width that are both an even number of pixels.
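A quick way to check this requirement for a given image size is a parity test on both dimensions. The following is a minimal sketch; `has_even_dims` is a hypothetical helper and the sizes are illustrative values.

```shell
# Hypothetical helper: succeeds only if both width and height are even.
has_even_dims() {
    local width="$1" height="$2"
    [ $(( width % 2 )) -eq 0 ] && [ $(( height % 2 )) -eq 0 ]
}

# Illustrative sizes only.
if has_even_dims 960 544; then
    echo "960x544: ok"
fi
if ! has_even_dims 961 544; then
    echo "961x544: width is odd, resize or crop first"
fi
```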

ROS Topics Published#

| ROS Topic | Interface | Description |
|---|---|---|
| | | The raw segmentation mask, encoded in mono8. Each pixel represents a class label. |
| | | The colored segmentation mask. The color palette is user specified by the |