isaac_ros_rtdetr#

Source code available on GitHub.

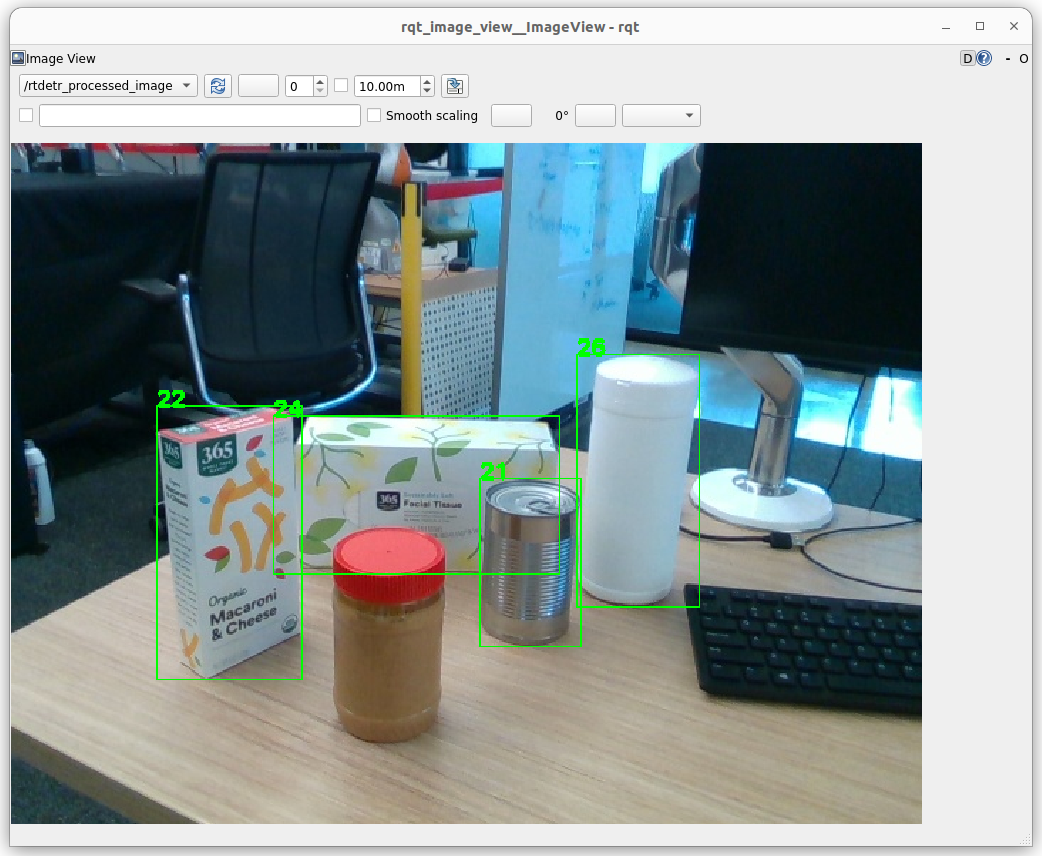

Stable object detections using SyntheticaDETR in a difficult scene with camera motion blur, round objects with few features, reflective object material, and light reflections

Quickstart#

Set Up Development Environment#

Set up your development environment by following the instructions in getting started.

(Optional) Install dependencies for any sensors you want to use by following the sensor-specific guides.

Note

We strongly recommend installing all sensor dependencies before starting any quickstarts. Some sensor dependencies require restarting the development environment during installation, which will interrupt the quickstart process.

Download Quickstart Assets#

Download quickstart data from NGC:

Make sure required libraries are installed.

sudo apt-get install -y curl jq tar

Then, run these commands to download the asset from NGC:

NGC_ORG="nvidia"
NGC_TEAM="isaac"
PACKAGE_NAME="isaac_ros_rtdetr"
NGC_RESOURCE="isaac_ros_rtdetr_assets"
NGC_FILENAME="quickstart.tar.gz"
MAJOR_VERSION=4
MINOR_VERSION=0
VERSION_REQ_URL="https://catalog.ngc.nvidia.com/api/resources/versions?orgName=$NGC_ORG&teamName=$NGC_TEAM&name=$NGC_RESOURCE&isPublic=true&pageNumber=0&pageSize=100&sortOrder=CREATED_DATE_DESC"
AVAILABLE_VERSIONS=$(curl -s \
    -H "Accept: application/json" "$VERSION_REQ_URL")
LATEST_VERSION_ID=$(echo $AVAILABLE_VERSIONS | jq -r "
    .recipeVersions[]
    | .versionId as \$v
    | \$v | select(test(\"^\\\\d+\\\\.\\\\d+\\\\.\\\\d+$\"))
    | split(\".\") | {major: .[0]|tonumber, minor: .[1]|tonumber, patch: .[2]|tonumber}
    | select(.major == $MAJOR_VERSION and .minor <= $MINOR_VERSION)
    | \$v
    " | sort -V | tail -n 1
)
if [ -z "$LATEST_VERSION_ID" ]; then
    echo "No corresponding version found for Isaac ROS $MAJOR_VERSION.$MINOR_VERSION"
    echo "Found versions:"
    echo $AVAILABLE_VERSIONS | jq -r '.recipeVersions[].versionId'
else
    mkdir -p ${ISAAC_ROS_WS}/isaac_ros_assets && \
    FILE_REQ_URL="https://api.ngc.nvidia.com/v2/resources/$NGC_ORG/$NGC_TEAM/$NGC_RESOURCE/\
versions/$LATEST_VERSION_ID/files/$NGC_FILENAME" && \
    curl -LO --request GET "${FILE_REQ_URL}" && \
    tar -xf ${NGC_FILENAME} -C ${ISAAC_ROS_WS}/isaac_ros_assets && \
    rm ${NGC_FILENAME}
fi
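The version-selection step in the download commands can be hard to read inside the jq filter. The following is a minimal offline sketch of the same idea: keep only `X.Y.Z` version IDs whose major version matches and whose minor version does not exceed the requested one, then take the highest by version sort. The helper name `pick_latest_version` and the sample version list are hypothetical and are not part of the Isaac ROS tooling.

```shell
# Hypothetical helper: reads version IDs (one per line) on stdin and prints
# the newest X.Y.Z whose major equals $1 and whose minor is <= $2.
pick_latest_version() {
    grep -E '^[0-9]+\.[0-9]+\.[0-9]+$' \
        | awk -F. -v major="$1" -v minor="$2" '$1 == major && $2 <= minor' \
        | sort -V \
        | tail -n 1
}

# Made-up sample list; "latest" is dropped because it is not X.Y.Z.
printf '%s\n' 3.2.5 4.0.2 4.0.10 4.1.0 latest | pick_latest_version 4 0
# prints 4.0.10
```

Version sort (`sort -V`) matters here: a plain lexical sort would rank `4.0.2` above `4.0.10`.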

Build isaac_ros_rtdetr#

Activate the Isaac ROS environment:

isaac-ros activate

Install the prebuilt Debian package:

sudo apt-get update

sudo apt-get install -y ros-jazzy-isaac-ros-rtdetr && \
    sudo apt-get install -y ros-jazzy-isaac-ros-rtdetr-models-install

Download and set up (convert ONNX to TensorRT engine plan) the pre-trained SyntheticaDETR model:

sudo apt-get update

ros2 run isaac_ros_rtdetr_models_install install_rtdetr_models.sh --eula

Note

This quickstart uses the NVIDIA-produced sdetr_grasp SyntheticaDETR model, but Isaac ROS RT-DETR is compatible with all RT-DETR architecture models. For more about the differences between SyntheticaDETR and RT-DETR, see here.

Note

The time taken to convert the ONNX model to a TensorRT engine plan varies across platforms. On Jetson AGX Thor, for example, the engine conversion can take 10-15 minutes to complete.
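Because the engine conversion can run for several minutes, it may help to confirm that the plan file exists before moving on to the launch steps. The sketch below assumes the installer writes the engine to the same path that this quickstart later passes as `engine_file_path`; the helper name `engine_ready` is hypothetical.

```shell
# Hypothetical helper: succeeds only if the given engine plan exists and is non-empty.
engine_ready() {
    [ -s "$1" ]
}

# Path taken from the engine_file_path argument used later in this quickstart
# (assumption: the model install script writes the plan here).
ENGINE_PATH="${ISAAC_ROS_WS:-}/isaac_ros_assets/models/synthetica_detr/sdetr_grasp.plan"

if engine_ready "$ENGINE_PATH"; then
    echo "Engine plan found: $ENGINE_PATH"
else
    echo "Engine plan missing or empty: $ENGINE_PATH"
fi
```

A non-empty file does not prove the conversion succeeded, so treat this only as a quick sanity check.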

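Alternatively, to build isaac_ros_rtdetr from source instead of installing the prebuilt Debian package, follow the steps below.

Install Git LFS: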

sudo apt-get install -y git-lfs && git lfs install

Clone this repository under ${ISAAC_ROS_WS}/src:

cd ${ISAAC_ROS_WS}/src && \
    git clone -b release-4.0 https://github.com/NVIDIA-ISAAC-ROS/isaac_ros_object_detection.git isaac_ros_object_detection

Activate the Isaac ROS environment:

isaac-ros activate

Use rosdep to install the package’s dependencies:

sudo apt-get update

rosdep update && rosdep install --from-paths ${ISAAC_ROS_WS}/src/isaac_ros_object_detection/isaac_ros_rtdetr --ignore-src -y

Download and set up (convert ONNX to TensorRT engine plan) the pre-trained SyntheticaDETR model:

sudo apt-get install -y ros-jazzy-isaac-ros-rtdetr-models-install && \
    ros2 run isaac_ros_rtdetr_models_install install_rtdetr_models.sh --eula

Note

This quickstart uses the NVIDIA-produced sdetr_grasp SyntheticaDETR model, but Isaac ROS RT-DETR is compatible with all RT-DETR architecture models. For more about the differences between SyntheticaDETR and RT-DETR, see here.

Note

The time taken to convert the ONNX model to a TensorRT engine plan varies across platforms. On Jetson AGX Thor, for example, the engine conversion can take 10-15 minutes to complete.

Build the package from source:

cd ${ISAAC_ROS_WS} && \
    colcon build --symlink-install --packages-up-to isaac_ros_rtdetr --base-paths ${ISAAC_ROS_WS}/src/isaac_ros_object_detection/isaac_ros_rtdetr

Source the ROS workspace:

Note

Make sure to repeat this step in every terminal created inside the Isaac ROS environment.

Since this package was built from source, the enclosing workspace must be sourced for ROS to be able to find the package’s contents.

source install/setup.bash
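Since the sourcing step has to be repeated in every terminal, a quick check of whether the current terminal already has the workspace overlay can save some confusion. The sketch below assumes that sourcing `install/setup.bash` adds entries under `${ISAAC_ROS_WS}/install` to the `AMENT_PREFIX_PATH` environment variable; the helper name `path_list_has_prefix` is hypothetical.

```shell
# Hypothetical helper: succeeds if the colon-separated list in $1
# contains an entry that begins with the prefix in $2.
path_list_has_prefix() {
    case ":$1" in
        *":$2"*) return 0 ;;
        *) return 1 ;;
    esac
}

# Assumption: a sourced workspace contributes entries under this directory.
WS_INSTALL="${ISAAC_ROS_WS:-}/install"

if path_list_has_prefix "${AMENT_PREFIX_PATH:-}" "$WS_INSTALL"; then
    echo "Workspace overlay is sourced in this terminal"
else
    echo "Workspace overlay not sourced; run: source install/setup.bash"
fi
```

Another way to check is `ros2 pkg prefix isaac_ros_rtdetr`, which should print a path inside the workspace once the overlay is sourced.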

Run Launch File#

Continuing inside the Isaac ROS environment, install the following dependencies:

sudo apt-get update

sudo apt-get install -y ros-jazzy-isaac-ros-examples

Run the following launch file to spin up a demo of this package using the quickstart rosbag:

ros2 launch isaac_ros_examples isaac_ros_examples.launch.py launch_fragments:=rtdetr interface_specs_file:=${ISAAC_ROS_WS}/isaac_ros_assets/isaac_ros_rtdetr/quickstart_interface_specs.json engine_file_path:=${ISAAC_ROS_WS}/isaac_ros_assets/models/synthetica_detr/sdetr_grasp.plan

Open a second terminal inside the Isaac ROS environment:

isaac-ros activate

Run the rosbag file to simulate an image stream:

ros2 bag play -l ${ISAAC_ROS_WS}/isaac_ros_assets/isaac_ros_rtdetr/quickstart.bag

To run with a live RealSense camera instead of the rosbag, first ensure that you have set up your RealSense camera using the RealSense setup tutorial. If you have not, set up the sensor and then restart this quickstart from the beginning.

Continuing inside the Isaac ROS environment, install the following dependencies:

sudo apt-get update

sudo apt-get install -y ros-jazzy-isaac-ros-examples ros-jazzy-isaac-ros-realsense

Run the following launch file to spin up a demo of this package using a RealSense camera:

ros2 launch isaac_ros_examples isaac_ros_examples.launch.py launch_fragments:=realsense_mono_rect,rtdetr engine_file_path:=${ISAAC_ROS_WS}/isaac_ros_assets/models/synthetica_detr/sdetr_grasp.plan

Note

The sdetr_grasp model used in this quickstart is only trained on a specific subset of objects.

To run the quickstart with a live camera stream, you will need to procure and present one of the specific objects listed in the appendix of the SyntheticaDETR model card.

To run with a live ZED camera instead, first ensure that you have set up your ZED camera using the ZED setup tutorial.

Continuing inside the Isaac ROS environment, install dependencies:

sudo apt-get update

sudo apt-get install -y ros-jazzy-isaac-ros-examples ros-jazzy-isaac-ros-image-proc ros-jazzy-isaac-ros-zed

Run the following launch file to spin up a demo of this package using a ZED Camera:

ros2 launch isaac_ros_examples isaac_ros_examples.launch.py \
    launch_fragments:=zed_mono_rect,rtdetr engine_file_path:=${ISAAC_ROS_WS}/isaac_ros_assets/models/synthetica_detr/sdetr_grasp.plan \
    interface_specs_file:=${ISAAC_ROS_WS}/isaac_ros_assets/isaac_ros_rtdetr/zed2_quickstart_interface_specs.json

Note

If you are using the ZED X series, replace zed2_quickstart_interface_specs.json with zedx_quickstart_interface_specs.json in the above command.

Note

The sdetr_grasp model used in this quickstart is only trained on a specific subset of objects.

To run the quickstart with a live camera stream, you will need to procure and present one of the specific objects listed in the appendix of the SyntheticaDETR model card.

Visualize Results#

Open a new terminal inside the Isaac ROS environment:

isaac-ros activate

Run the RT-DETR visualization script:

ros2 run isaac_ros_rtdetr isaac_ros_rtdetr_visualizer.py

Open another terminal inside the Isaac ROS environment:

isaac-ros activate

Visualize and validate the output of the package with rqt_image_view:

ros2 run rqt_image_view rqt_image_view /rtdetr_processed_image

After about 1 minute, your output should look like this:
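If the image view stays empty, one thing to check is whether the `/rtdetr_processed_image` topic is advertised at all. The sketch below wraps that check in a small helper; `topic_listed` is a hypothetical name, and the block only queries ROS when the `ros2` CLI is available in the current terminal.

```shell
# Hypothetical helper: reads topic names (one per line) on stdin and
# succeeds if the exact topic name given as $1 is among them.
topic_listed() {
    grep -Fxq -- "$1"
}

# Only query ROS if the ros2 CLI is available in this terminal.
if command -v ros2 >/dev/null 2>&1; then
    if ros2 topic list | topic_listed /rtdetr_processed_image; then
        echo "/rtdetr_processed_image is advertised"
    else
        echo "/rtdetr_processed_image not found; is the visualizer running?"
    fi
fi
```

If the topic is advertised but nothing appears, `ros2 topic hz /rtdetr_processed_image` shows whether messages are actually being published.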

Try More Examples#

To continue your exploration, check out the following suggested examples:

This package only supports models based on the RT-DETR architecture. Some of the supported RT-DETR models from NGC:

| Model Name | Use Case |
|---|---|
| | Model trained on 100% synthetic data for object classes that can be grasped by a standard robot arm |
| | Model trained on 100% synthetic data for object classes that are relevant to the operation of an Autonomous Mobile Robot |

To learn how to use these models, click here.

Troubleshooting#

Isaac ROS Troubleshooting#

For solutions to problems with Isaac ROS, see troubleshooting.

Deep Learning Troubleshooting#

For solutions to problems with using DNN models, see troubleshooting deeplearning.

API#

Usage#

ros2 launch isaac_ros_rtdetr isaac_ros_rtdetr.launch.py model_file_path:=<path to .onnx> engine_file_path:=<path to .plan> input_tensor_names:=<input tensor names> input_binding_names:=<input binding names> output_tensor_names:=<output tensor names> output_binding_names:=<output binding names> verbose:=<TensorRT verbosity> force_engine_update:=<force TensorRT update>

RtDetrPreprocessorNode#

ROS Parameters#

| ROS Parameter | Type | Default | Description |
|---|---|---|---|
| | | | The name of the encoded image tensor binding in the input tensor list. |
| | | | The name of the encoded image tensor binding in the output tensor list. |
| | | | The name of the target image size tensor binding in the output tensor list. |
| | | | The height of the original image, for resizing the final bounding box to match the original dimensions. |
| | | | The width of the original image, for resizing the final bounding box to match the original dimensions. |

ROS Topics Subscribed#

| ROS Topic | Interface | Description |
|---|---|---|
| | | The tensor that contains the encoded image data. |

ROS Topics Published#

| ROS Topic | Interface | Description |
|---|---|---|
| | | Tensor list containing encoded image data and image size tensors. |

RtDetrDecoderNode#

ROS Parameters#

| ROS Parameter | Type | Default | Description |
|---|---|---|---|
| | | | The name of the labels tensor binding in the input tensor list. |
| | | | The name of the boxes tensor binding in the input tensor list. |
| | | | The name of the scores tensor binding in the input tensor list. |
| | | | The minimum score required for a particular bounding box to be published. |
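The minimum-score parameter acts as a filter on detections. As a rough illustration of that idea only (not the decoder node's actual code), the sketch below keeps `label score` lines whose score is at or above a threshold. The helper name `filter_by_score`, the sample labels, and the threshold value are all hypothetical, and whether the node treats the boundary as inclusive is an assumption.

```shell
# Hypothetical helper: reads "label score" lines on stdin and keeps those
# whose score is >= the threshold given as $1.
filter_by_score() {
    awk -v min="$1" '$2 + 0 >= min + 0'
}

# Made-up detections; with a threshold of 0.7 the middle one is dropped.
printf '%s\n' 'class_a 0.92' 'class_b 0.41' 'class_c 0.70' | filter_by_score 0.7
# prints:
# class_a 0.92
# class_c 0.70
```

Raising the threshold trades missed detections for fewer false positives, so a suitable value depends on the scene and the model.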

ROS Topics Subscribed#

| ROS Topic | Interface | Description |
|---|---|---|
| | | The tensor that represents the inferred aligned bounding boxes, labels, and scores. |

ROS Topics Published#

| ROS Topic | Interface | Description |
|---|---|---|
| | | Aligned image bounding boxes with detection class. |