isaac_ros_detectnet

Source code on GitHub.

Quickstart

Set Up Development Environment

Set up your development environment by following the instructions in the Getting Started guide.

Clone isaac_ros_common under ${ISAAC_ROS_WS}/src:

cd ${ISAAC_ROS_WS}/src && \
   git clone -b release-3.1 https://github.com/NVIDIA-ISAAC-ROS/isaac_ros_common.git isaac_ros_common

(Optional) Install dependencies for any sensors you want to use by following the sensor-specific guides.

Warning

We strongly recommend installing all sensor dependencies before starting any quickstarts. Some sensor dependencies require restarting the Isaac ROS Dev container during installation, which will interrupt the quickstart process.

Download Quickstart Assets

Download quickstart data from NGC:

Make sure the required tools (curl, jq, and tar) are installed:

sudo apt-get install -y curl jq tar

Then, run these commands to download the asset from NGC:

NGC_ORG="nvidia"
NGC_TEAM="isaac"
PACKAGE_NAME="isaac_ros_detectnet"
NGC_RESOURCE="isaac_ros_detectnet_assets"
NGC_FILENAME="quickstart.tar.gz"
MAJOR_VERSION=3
MINOR_VERSION=1
VERSION_REQ_URL="https://catalog.ngc.nvidia.com/api/resources/versions?orgName=$NGC_ORG&teamName=$NGC_TEAM&name=$NGC_RESOURCE&isPublic=true&pageNumber=0&pageSize=100&sortOrder=CREATED_DATE_DESC"
AVAILABLE_VERSIONS=$(curl -s \
    -H "Accept: application/json" "$VERSION_REQ_URL")
LATEST_VERSION_ID=$(echo $AVAILABLE_VERSIONS | jq -r "
    .recipeVersions[]
    | .versionId as \$v
    | \$v | select(test(\"^\\\\d+\\\\.\\\\d+\\\\.\\\\d+$\"))
    | split(\".\") | {major: .[0]|tonumber, minor: .[1]|tonumber, patch: .[2]|tonumber}
    | select(.major == $MAJOR_VERSION and .minor <= $MINOR_VERSION)
    | \$v
    " | sort -V | tail -n 1
)
if [ -z "$LATEST_VERSION_ID" ]; then
    echo "No corresponding version found for Isaac ROS $MAJOR_VERSION.$MINOR_VERSION"
    echo "Found versions:"
    echo $AVAILABLE_VERSIONS | jq -r '.recipeVersions[].versionId'
else
    mkdir -p ${ISAAC_ROS_WS}/isaac_ros_assets && \
    FILE_REQ_URL="https://api.ngc.nvidia.com/v2/resources/$NGC_ORG/$NGC_TEAM/$NGC_RESOURCE/\
versions/$LATEST_VERSION_ID/files/$NGC_FILENAME" && \
    curl -LO --request GET "${FILE_REQ_URL}" && \
    tar -xf ${NGC_FILENAME} -C ${ISAAC_ROS_WS}/isaac_ros_assets && \
    rm ${NGC_FILENAME}
fi
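The jq filter in the script above chooses which asset version to download. For readers less familiar with jq, the following Python sketch mirrors that selection logic:

- It keeps only plain MAJOR.MINOR.PATCH version IDs.
- It requires the same major version and a minor version no newer than the requested one.
- It returns the highest match, as `sort -V | tail -n 1` does.

The function name `select_asset_version` and the sample version list are hypothetical and are used for illustration only.

```python
import re

# Plain MAJOR.MINOR.PATCH only, like the jq test("^\\d+\\.\\d+\\.\\d+$") filter.
SEMVER = re.compile(r"\d+\.\d+\.\d+")


def select_asset_version(version_ids, major=3, minor=1):
    """Return the newest version ID with the given major and minor <= `minor`."""
    candidates = []
    for vid in version_ids:
        if not SEMVER.fullmatch(vid):
            continue  # skip tags such as "3.1.2-rc1"
        ver = tuple(int(part) for part in vid.split("."))
        if ver[0] == major and ver[1] <= minor:
            candidates.append((ver, vid))
    if not candidates:
        return None  # the shell script prints the available versions instead
    # Numeric tuple comparison orders versions the way `sort -V` does here.
    return max(candidates)[1]


# Hypothetical list of version IDs returned by the NGC catalog.
print(select_asset_version(["2.1.0", "3.0.2", "3.1.0", "3.1.1", "3.2.0", "3.1.2-rc1"]))
```

Comparing integer tuples rather than raw strings avoids treating "3.0.10" as older than "3.0.9".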

Build isaac_ros_detectnet

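Choose one of the two options below: install the prebuilt Debian package, or build the package from source.

To install the prebuilt Debian package, launch the Docker container using the run_dev.sh script:

cd ${ISAAC_ROS_WS}/src/isaac_ros_common && \
   ./scripts/run_dev.sh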

Install the prebuilt Debian package:

sudo apt-get install -y ros-humble-isaac-ros-detectnet ros-humble-isaac-ros-dnn-image-encoder ros-humble-isaac-ros-triton

To build from source instead, clone this repository under ${ISAAC_ROS_WS}/src:

cd ${ISAAC_ROS_WS}/src && \
   git clone -b release-3.1 https://github.com/NVIDIA-ISAAC-ROS/isaac_ros_object_detection.git isaac_ros_object_detection

Launch the Docker container using the run_dev.sh script:

cd ${ISAAC_ROS_WS}/src/isaac_ros_common && \
   ./scripts/run_dev.sh

Use rosdep to install the package’s dependencies:

rosdep install --from-paths ${ISAAC_ROS_WS}/src/isaac_ros_object_detection --ignore-src -y

Build the package from source:

cd ${ISAAC_ROS_WS} && \
   colcon build --symlink-install --packages-up-to isaac_ros_detectnet

Source the ROS workspace:

Note

Make sure to repeat this step in every terminal created inside the Docker container.

Since this package was built from source, the enclosing workspace must be sourced for ROS to be able to find the package’s contents.

source install/setup.bash

Run Launch File

To run the demo with the quickstart rosbag:

Continuing inside the Docker container, run the quickstart setup script, which downloads the PeopleNet model from NVIDIA GPU Cloud (NGC):

ros2 run isaac_ros_detectnet setup_model.sh --height 632 --width 1200 --config-file quickstart_config.pbtxt

Continuing inside the Docker container, install the following dependencies:

sudo apt-get install -y ros-humble-isaac-ros-examples

Run the following launch file to spin up a demo of this package using the quickstart rosbag:

ros2 launch isaac_ros_examples isaac_ros_examples.launch.py launch_fragments:=detectnet interface_specs_file:=${ISAAC_ROS_WS}/isaac_ros_assets/isaac_ros_detectnet/quickstart_interface_specs.json

Open a new terminal inside the Docker container:

cd ${ISAAC_ROS_WS}/src/isaac_ros_common && \
   ./scripts/run_dev.sh

Run the rosbag file to simulate an image stream:

ros2 bag play -l ${ISAAC_ROS_WS}/isaac_ros_assets/isaac_ros_detectnet/rosbags/detectnet_rosbag --remap image:=image_rect camera_info:=camera_info_rect

To run the demo with a RealSense camera:

Continuing inside the Docker container, run the quickstart setup script, which downloads the PeopleNet model from NVIDIA GPU Cloud (NGC):

ros2 run isaac_ros_detectnet setup_model.sh --height 480 --width 640 --config-file realsense_config.pbtxt

Ensure that you have already set up your RealSense camera using the RealSense setup tutorial. If you have not, please set up the sensor and then restart this quickstart from the beginning.

Continuing inside the Docker container, install the following dependencies:

sudo apt-get install -y ros-humble-isaac-ros-examples ros-humble-isaac-ros-realsense

Run the following launch file to spin up a demo of this package using a RealSense camera:

ros2 launch isaac_ros_examples isaac_ros_examples.launch.py launch_fragments:=realsense_mono_rect,detectnet

To run the demo with a Hawk camera:

Continuing inside the Docker container, run the quickstart setup script, which downloads the PeopleNet model from NVIDIA GPU Cloud (NGC):

ros2 run isaac_ros_detectnet setup_model.sh --height 1200 --width 1920 --config-file hawk_config.pbtxt

Ensure that you have already set up your Hawk camera using the Hawk setup tutorial. If you have not, please set up the sensor and then restart this quickstart from the beginning.

Continuing inside the Docker container, install the following dependencies:

sudo apt-get install -y ros-humble-isaac-ros-examples ros-humble-isaac-ros-argus-camera

Run the following launch file to spin up a demo of this package using a Hawk camera:

ros2 launch isaac_ros_examples isaac_ros_examples.launch.py launch_fragments:=argus_mono,rectify_mono,detectnet

To run the demo with a ZED camera:

Ensure that you have already set up your ZED camera using the ZED setup tutorial.

Continuing inside the Docker container, install dependencies:

sudo apt-get install -y ros-humble-isaac-ros-examples ros-humble-isaac-ros-stereo-image-proc ros-humble-isaac-ros-zed

Continuing inside the Docker container, run the quickstart setup script, which downloads the PeopleNet model from NVIDIA GPU Cloud (NGC):

Note

If you are using a ZED X series camera, change the resolution and replace zed2_config.pbtxt with zedx_config.pbtxt in the command below.

ros2 run isaac_ros_detectnet setup_model.sh --height 720 --width 1280 --config-file zed2_config.pbtxt

Run the following launch file to spin up a demo of this package using a ZED camera:

ros2 launch isaac_ros_examples isaac_ros_examples.launch.py \
   launch_fragments:=zed_mono_rect,detectnet \
   interface_specs_file:=${ISAAC_ROS_WS}/isaac_ros_assets/isaac_ros_detectnet/zed2_quickstart_interface_specs.json

Note

If you are using the ZED X series, replace zed2_quickstart_interface_specs.json with zedx_quickstart_interface_specs.json in the above command.
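Each input source above pairs setup_model.sh with its own resolution and config file. The hypothetical helper below is a minimal sketch that does two things:

- It collects the values used in this quickstart in one place.
- It rebuilds the corresponding command line for a given source.

The source keys are illustrative names. The ZED X variant is omitted because its resolution is not given here.

```python
import shlex

# (height, width, config file) as passed to setup_model.sh in this quickstart.
# The dictionary keys are illustrative names, not package identifiers.
SETUP_MODEL_ARGS = {
    "rosbag": (632, 1200, "quickstart_config.pbtxt"),
    "realsense": (480, 640, "realsense_config.pbtxt"),
    "hawk": (1200, 1920, "hawk_config.pbtxt"),
    "zed2": (720, 1280, "zed2_config.pbtxt"),
}


def setup_model_command(source):
    """Return the setup_model.sh command line for one input source."""
    height, width, config = SETUP_MODEL_ARGS[source]
    argv = [
        "ros2", "run", "isaac_ros_detectnet", "setup_model.sh",
        "--height", str(height),
        "--width", str(width),
        "--config-file", config,
    ]
    # shlex.join quotes arguments only when the shell would need it.
    return shlex.join(argv)


print(setup_model_command("realsense"))
```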

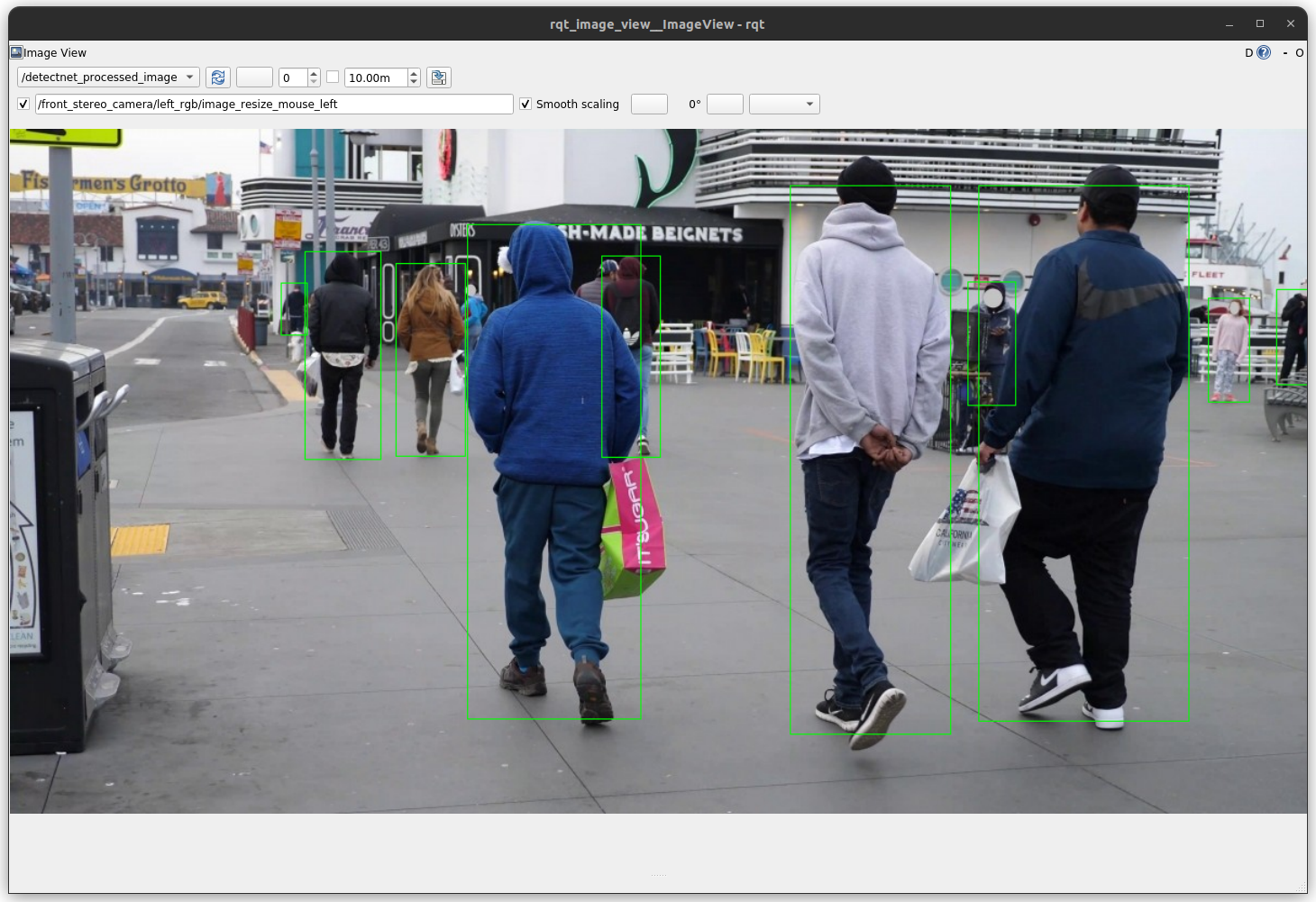

Visualize Results

Open a new terminal and run the isaac_ros_detectnet_visualizer:

cd ${ISAAC_ROS_WS}/src/isaac_ros_common && \
   ./scripts/run_dev.sh

cd ${ISAAC_ROS_WS} && \
   source install/setup.bash && \
   ros2 run isaac_ros_detectnet isaac_ros_detectnet_visualizer.py --ros-args --remap image:=image_rect

Open a new terminal and run rqt_image_view to visualize the output:

cd ${ISAAC_ROS_WS}/src/isaac_ros_common && \
   ./scripts/run_dev.sh

ros2 run rqt_image_view rqt_image_view /detectnet_processed_image

You should see an output as shown below:

Try More Examples

To continue your exploration, check out the following suggested examples:

This package supports only models based on the DetectNet_v2 architecture. Some of the supported DetectNet models available on NGC are listed below:

| Model Name | Use Case |
|---|---|
| | People counting with a mobile robot |
| | People counting, heatmap generation, social distancing |
| | Detect and track cars |
| | Identify objects from a moving object |
| | Detect faces in a dark environment with IR camera |

To learn how to use these models, see the corresponding tutorial in the Isaac ROS documentation.

ROS 2 Graph Configuration

To run the DetectNet object detection inference, the following ROS 2 nodes must be set up and running:

Isaac ROS DNN Image Encoder: This node takes an image message and converts it to a tensor (TensorList, isaac_ros_tensor_list_interfaces/TensorList) that can be processed by the network.

Isaac ROS DNN Inference - Triton: This node executes the DetectNet network and takes, as input, the tensor from the DNN Image Encoder.

Note

The Isaac ROS TensorRT package is not able to perform inference with DetectNet models at this time.

The output is a TensorList message containing the encoded detections. Use the parameters model_name and model_repository_paths to point to the model folder and set the model name. The .plan file should be located at $model_repository_path/$model_name/1/model.plan.

Isaac ROS Detectnet Decoder: This node takes the TensorList with encoded detections as input and outputs Detection2DArray messages for each frame. See the following section for the parameters.
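You can check the model repository layout described above before launching. The minimal sketch below assumes a local repository directory. The helper names `expected_plan_path` and `check_model_repository` are hypothetical. The `1` directory is the model version, following Triton's numbered-version repository convention.

```python
from pathlib import Path


def expected_plan_path(model_repository_path, model_name, version=1):
    """Build <model_repository_path>/<model_name>/<version>/model.plan."""
    return Path(model_repository_path) / model_name / str(version) / "model.plan"


def check_model_repository(model_repository_path, model_name):
    """Return the .plan path, or raise FileNotFoundError if it is missing."""
    plan = expected_plan_path(model_repository_path, model_name)
    if not plan.is_file():
        raise FileNotFoundError(f"expected TensorRT plan at {plan}")
    return plan
```

For example, `check_model_repository("/tmp/models", "my_model")` would check for `/tmp/models/my_model/1/model.plan`. Both paths in that call are placeholders.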

Troubleshooting

Isaac ROS Troubleshooting

For solutions to problems with Isaac ROS, see the Isaac ROS troubleshooting guide.

Deep Learning Troubleshooting

For solutions to problems with using DNN models, see the deep learning troubleshooting guide.

API

Usage

ros2 launch isaac_ros_detectnet isaac_ros_detectnet.launch.py \
   label_list:=<list of labels> \
   enable_confidence_threshold:=<enable confidence thresholding> \
   enable_bbox_area_threshold:=<enable bbox size thresholding> \
   enable_dbscan_clustering:=<enable dbscan clustering> \
   confidence_threshold:=<minimum confidence value> \
   min_bbox_area:=<minimum bbox area value> \
   dbscan_confidence_threshold:=<minimum confidence for dbscan algorithm> \
   dbscan_eps:=<epsilon distance> \
   dbscan_min_boxes:=<minimum returned boxes> \
   dbscan_enable_athr_filter:=<area-to-hit-ratio filter> \
   dbscan_threshold_athr:=<area-to-hit ratio threshold> \
   dbscan_clustering_algorithm:=<choice of clustering algorithm> \
   bounding_box_scale:=<bounding box normalization value> \
   bounding_box_offset:=<XY offset for bounding box>
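The bounding_box_scale and bounding_box_offset arguments relate raw network outputs to pixel coordinates. The sketch below assumes the grid-cell convention used by NVIDIA's DeepStream sample parser for DetectNet_v2-style detectors. In that convention, each output cell predicts distances from its center, normalized by a scale factor. The parser hard-codes the scale as 35.0 and the center offset as 0.5.

This sketch is not taken from the Isaac ROS decoder. The function name `decode_box` and the 16-pixel stride in the usage line are hypothetical.

```python
def decode_box(row, col, raw, stride, bounding_box_scale=35.0, bounding_box_offset=0.5):
    """Convert one grid cell's raw (x1, y1, x2, y2) outputs to pixel corners.

    ASSUMPTION: raw[i] are distances from the cell center divided by
    bounding_box_scale (DeepStream sample-parser convention).
    """
    cx = col * stride + bounding_box_offset  # cell center x, in pixels
    cy = row * stride + bounding_box_offset  # cell center y, in pixels
    x1 = cx - raw[0] * bounding_box_scale
    y1 = cy - raw[1] * bounding_box_scale
    x2 = cx + raw[2] * bounding_box_scale
    y2 = cy + raw[3] * bounding_box_scale
    return x1, y1, x2, y2


# Hypothetical cell and stride, for illustration only.
print(decode_box(row=2, col=3, raw=(0.1, 0.2, 0.3, 0.4), stride=16))
```

This convention is one reason the scale must match the value used during training. A different scale stretches or shrinks every decoded box.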

ROS Parameters

| ROS Parameter | Type | Default | Description |
|---|---|---|---|
| | | | The list of labels. These are loaded from |
| | | | The minimum confidence value used to threshold detections before clustering. |
| | | | The minimum bounding box area used to threshold detections before clustering. |
| | | | Holds the epsilon to control merging of overlapping boxes. Refer to OpenCV |
| | | | Holds the epsilon to control merging of overlapping boxes. Refer to OpenCV |
| | | | The minimum number of boxes to return. |
| | | | Enables the area-to-hit ratio (ATHR) filter. The ATHR is calculated as: ATHR = sqrt(clusterArea) / nObjectsInCluster. |
| | | | The |
| | | | The clustering algorithm selection. ( |
| | | | The scale parameter, which should match the training configuration. |
| | | | Bounding box offset for both X and Y dimensions. |
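The following sketch illustrates two entries from the table above: pre-clustering thresholding on confidence and bounding box area, and the ATHR formula. It makes these assumptions:

- Comparisons are inclusive (>=).
- Boxes are axis-aligned and given as corner coordinates.
- The `Box` type, the helper names, and the threshold values in the usage line are hypothetical.

The sketch does not reproduce the decoder's DBSCAN clustering.

```python
import math
from dataclasses import dataclass


@dataclass
class Box:
    x1: float
    y1: float
    x2: float
    y2: float
    confidence: float

    @property
    def area(self):
        # Clamp to zero so degenerate boxes never report negative area.
        return max(0.0, self.x2 - self.x1) * max(0.0, self.y2 - self.y1)


def prefilter(boxes, confidence_threshold, min_bbox_area):
    """Keep detections meeting both thresholds (ASSUMPTION: inclusive)."""
    return [
        b for b in boxes
        if b.confidence >= confidence_threshold and b.area >= min_bbox_area
    ]


def athr(cluster_area, n_objects_in_cluster):
    """Area-to-hit ratio: ATHR = sqrt(clusterArea) / nObjectsInCluster."""
    return math.sqrt(cluster_area) / n_objects_in_cluster


# Hypothetical detections and thresholds, for illustration only.
detections = [Box(0, 0, 10, 10, 0.9), Box(0, 0, 2, 2, 0.95), Box(0, 0, 20, 20, 0.1)]
print(prefilter(detections, confidence_threshold=0.35, min_bbox_area=10.0))
print(athr(cluster_area=400.0, n_objects_in_cluster=4))
```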

ROS Topics Subscribed

| ROS Topic | Interface | Description |
|---|---|---|
| | | The tensor that represents the inferred aligned bounding boxes. |

ROS Topics Published

| ROS Topic | Interface | Description |
|---|---|---|
| | | Aligned image bounding boxes with detection class. |